Use Case Scenario

A TV broadcaster has a real-time video and audio feed that it live streams directly from international sporting events and wants to add live closed captioning. Many viewers have difficulty hearing the content because of where they watch the sports, such as bars, restaurants, public transport, or office environments.

To improve the live streaming sports experience and make the content more accessible in the places where viewers watch their sports, near-real-time closed captions need to be added to the video stream. While closed captions on sports video feeds may also improve the experience for the hard-of-hearing, the primary purpose is to enhance the viewing experience for general users in locations where it is difficult or impossible to hear the audio.

- Live video and audio feed is streamed from the sports stadium to the broadcast center.

- A real-time voice recognition system that is able to produce real-time closed captions is required.

- Closed captions must have the shortest possible delay between the spoken word and the closed caption being displayed on the viewer's screen.

- Online closed captioning services are not suitable for this task, as they are remote and introduce latency into the voice recognition and closed caption processing. An on-premise solution is a requirement.

- Captions must be delivered to multiple viewing devices, such as set-top boxes, smart TVs, mobile phones, and regular video feeds.

- The initial deployment is in English only. Other languages are intended for the future and may use real-time translation or a separate language-specific commentary stream for each language.

- As the broadcast is live, special consideration must be given to handling hardware, software, and other failures, and to ensuring appropriate redundancy in the deployment architecture.

- Live events such as sports have a lot of additional sound that can interfere with the quality of the voice recognition. It is possible to get a clean audio channel that is separated from stadium noise such as music, cheering, and yelling.

- Any international sporting event will have a wide variety of unique or uncommon names of people, places, and teams, as well as vocabulary and dialog styles specific to the sport. This presents a significant challenge for voice recognition, as the software will not know these names, terms, or styles, resulting in errors in the closed captions.

- While real-time translation is out of scope for the initial deployment, real-time closed captioning in multiple languages is planned for the future. Additional language-specific audio streams and machine translation will be added, and the system should be able to scale and expand to support these needs.

Technologies Used

The solution uses the following technologies:

- Language Studio REST APIs

- Automated Speech Recognition (ASR)

- Advanced Media Processing (AMP)

- ASR Customization

- Natural Language Processing (NLP)


Solution

Environment and Workflow

Overview

- Language Studio real-time Automated Speech Recognition (ASR) and Advanced Media Processing (AMP) features are designed specifically for this kind of use case. All the base functionality is available in the standard platform.

- The broadcaster has the following basic environment:

- Live video and audio feeds via optical fibre to the broadcast center.

- A 5-second delay between the live event and the broadcast.

- A video playout system that is capable of muxing the subtitle text back into the video signal or feed. The system integrates with products such as Harmonic Spectrum X and the 360 Systems TSS series.

- Language Studio Enterprise servers are installed on-premise in the Broadcast Center, near the other servers and the playout system, to remove unnecessary network latency and reduce possible points of failure. (See the High-Availability and Fault Tolerance section below.)

- To reduce interference and optimize audio quality, the broadcaster separated the commentary audio from the other audio (cheering and general stadium noise). While not a requirement, separating the voice channel from the other sounds further improves the quality of the voice recognition.

- Language Studio Advanced Media Processing (AMP) features were configured to format the subtitles in the exact structure and format needed for the broadcast. This included text formatting and splitting rules that produced natural-reading subtitles, avoiding splits in unusual places that make reading more difficult.

- The quality of the ASR is determined in part by the amount of time available to process the audio stream. The ASR processing was set to produce output at a maximum delay of 4.5 seconds, which is more than sufficient for high-quality ASR.

- As there was a 5-second delay in the broadcast, 500 milliseconds remained for muxing the subtitles back into the video stream. This enabled each subtitle to appear on screen at the exact time the words were spoken, giving the appearance of real-time voice recognition with no delay in the end-user experience.

- If the use case did not permit such a delay, the maximum ASR delay could be set shorter, with captions presented on screen 1-3 seconds after the words were spoken.

- Caption files are produced as open captions and stored with the Master Edit for later use in the VOD encoding farm.
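The timing above is simple arithmetic: the broadcast delay has to cover the maximum ASR delay plus the muxing time, and any shortfall shows up as caption lag. The Python sketch below checks that budget. The class and field names are hypothetical; the 5 s, 4.5 s, and 500 ms figures come from this use case, while the second configuration (no broadcast delay, 2 s ASR delay) is an illustrative assumption rather than a measured result.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatencyBudget:
    """Caption latency budget; all values are in milliseconds."""

    broadcast_delay_ms: int  # delay between the live event and the broadcast
    asr_max_delay_ms: int    # maximum delay configured for ASR output
    mux_ms: int              # time reserved for muxing subtitles into the video

    def slack_ms(self) -> int:
        """Budget left after ASR and muxing; negative means captions trail the audio."""
        return self.broadcast_delay_ms - self.asr_max_delay_ms - self.mux_ms

    def caption_lag_ms(self) -> int:
        """How long after the words are heard on air the caption appears (0 = in sync)."""
        return max(0, -self.slack_ms())


# Figures from this use case: 5 s broadcast delay, 4.5 s ASR, 500 ms muxing.
delayed = LatencyBudget(broadcast_delay_ms=5000, asr_max_delay_ms=4500, mux_ms=500)
print(delayed.slack_ms(), delayed.caption_lag_ms())  # 0 0

# Hypothetical: no broadcast delay and a shorter 2 s ASR setting.
undelayed = LatencyBudget(broadcast_delay_ms=0, asr_max_delay_ms=2000, mux_ms=500)
print(undelayed.slack_ms(), undelayed.caption_lag_ms())  # -2500 2500
```

A slack of zero means the budget is used exactly, so captions land in sync with the audio; in the hypothetical undelayed case the 2.5 s lag falls within the 1-3 second range mentioned above.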

Example Live Broadcast Workflow

ASR – Voice Recognition Customization / Domain Adaptation

Challenges

- Voice recognition software can only recognize words that it knows, that is, words contained in the data used to train its AI models. New words, or words used in a new context, present challenges in the following areas:

- Sports vocabulary:

Each sport will have specific terms, vocabulary, and slang that voice recognition software is not familiar with. Examples:

- Cricket: biffer, beehive, googly, carrom ball, clean bowled, daisy cutter, dead ball, doctored pitch, golden duck, leg glance, offer the light, quick single, reverse swing

- Football: back heel, center circle, consolation goal, free kick, grand slam, kick and rush, minnow, match fixing, perfect hat-trick, phantom goal, scorpion kick, scrimmage, subbed, vuvuzela

- Swimming: block, catch phrase, drafting, drag suit, false start, negative split, recovery phase, feeding station, gel pack, seek and spot, reach and roll, slip streaming

- Names of people, places, teams, and organizations:

Each sport will have a list of names that may not be commonplace in everyday English vocabulary. Examples:

- Players: Usain Bolt, Yuma Kagiyama, Sachin Tendulkar, Yevgeny Kafelnikov, Franz Beckenbauer

- Teams, Groups, or Pairs: Sunrisers Hyderabad, Brighton and Hove Albion, Evgenia Tarasova / Vladimir Morozov

- Voice recognition must capture these words accurately, so it must be customized to be aware of these words and phrases.
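A practical first step is to find which expected terms a base recognizer is unlikely to know. The Python sketch below assumes the base model's vocabulary is available as a plain word list (a hypothetical input; Language Studio may not expose one) and flags every term containing an unknown word. Note what it cannot catch: a known word used in a new sports sense, such as "duck" in cricket, passes the check, which is why contextual adaptation matters.

```python
import re


def out_of_vocabulary(terms: list[str], vocabulary: set[str]) -> list[str]:
    """Return the terms that contain at least one word missing from `vocabulary`.

    Matching is case-insensitive and word-based, so a multi-word name is
    flagged if any of its words is unknown.
    """
    known = {word.lower() for word in vocabulary}
    flagged = []
    for term in terms:
        words = re.findall(r"[\w']+", term.lower())
        if any(word not in known for word in words):
            flagged.append(term)
    return flagged


# Tiny, hypothetical base vocabulary for illustration only.
base_vocabulary = {"the", "ball", "golden", "duck", "free", "kick", "reverse", "swing"}
terms = ["golden duck", "googly", "free kick", "Sachin Tendulkar", "reverse swing"]
print(out_of_vocabulary(terms, base_vocabulary))  # ['googly', 'Sachin Tendulkar']
```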

Approaches

- Glossaries:

A glossary is insufficient on its own, as it only adds a word in isolation without any contextual reference for when it should be used. Glossaries are absolute and introduce bias and over-fitting errors into the results. A small number of glossary names/terms can have a positive effect, but a large number can have an overall negative effect. Glossaries should only be used for spot-fixes and for new names that were unplanned or unexpected (e.g., a player who joins late as a replacement). In such cases, glossaries are very useful and take effect immediately.

- Customization / Domain Adaptation:

Customization / domain adaptation is the best approach. It combines glossaries and information known before the broadcast with contextual references that enable the vocabulary and terminology to be used at the appropriate time, without over-fitting and generating bad transcriptions. Given this context, the ASR software can determine when to use a glossary term, resulting in significantly higher accuracy. Unlike glossaries alone, this approach does not suffer from over-fitting, as the contextual data determines when to use the vocabulary.

General Process

- Client Inputs:

- Known terminology, slang, player names, place names, and team names.

- Any existing text in document forms such as HTML, Microsoft Word, Adobe PDF, etc.

- URLs of websites with context relevant to the sport. These could include blog sites, regulatory bodies, fan sites, and official sports event sites (e.g., the Olympics website, the Indian Premier League (IPL) official website, the FIFA official website).

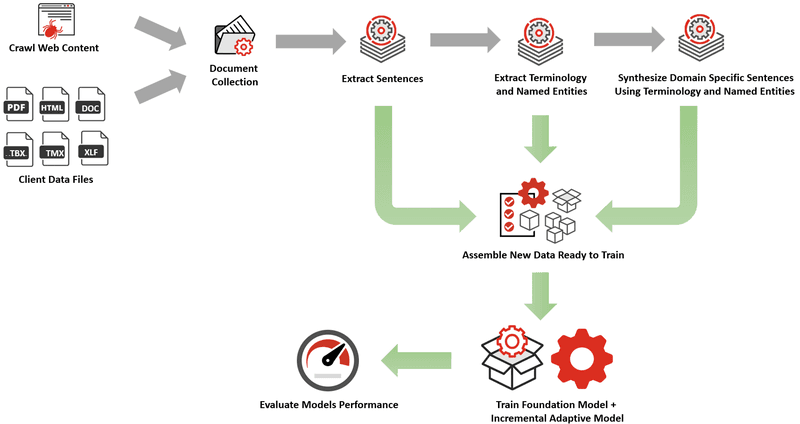

- Data Processing:

- Gather text from all the resources by automatically crawling websites, downloading documents, and extracting their content as text, using the process automation and Natural Language Processing (NLP) tools within Language Studio.

- Extract sentences from the text. (Sentence Segmentation)

- Extract terminology and names from the text. (Terminology Extraction and Named Entity Recognition)

- Use the terminology and named entities to automatically search for new content that utilizes the same vocabulary and repeat the above process of crawling, downloading and extracting data.

- Synthesize sentences in the context of the domain using large language models. This process creates hundreds of thousands of sentences that use sport-specific terminology and vocabulary in a domain-specific context.
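The segmentation, name-extraction, and re-search steps above can be illustrated with a deliberately crude Python sketch. The regular expressions are stand-ins for the Language Studio NLP tools, whose interfaces are not described here, and the search-query format is hypothetical. Production pipelines use trained sentence segmenters and named entity recognition models, which handle abbreviations and sentence-initial words far better.

```python
import re
from collections import Counter

# Naive boundary: ".", "!" or "?" followed by whitespace and an uppercase letter.
# It will wrongly split abbreviations such as "Mr. Smith".
SENTENCE_BOUNDARY = re.compile(r"(?<=[.!?])\s+(?=[A-Z])")

# Crude name heuristic: two or more consecutive capitalized words.
# It also picks up sentence-initial phrases such as "The Indian".
NAME_RUN = re.compile(r"\b[A-Z][a-z]+(?:\s+[A-Z][a-z]+)+\b")


def segment_sentences(text: str) -> list[str]:
    """Split raw text into sentences."""
    return [s.strip() for s in SENTENCE_BOUNDARY.split(text) if s.strip()]


def candidate_names(sentences: list[str]) -> Counter:
    """Count candidate multi-word names across sentences."""
    counts = Counter()
    for sentence in sentences:
        counts.update(NAME_RUN.findall(sentence))
    return counts


def build_search_queries(names: Counter, sport: str, top_n: int = 5) -> list[str]:
    """Turn the most frequent names into search queries (hypothetical query format)."""
    return [f'"{name}" {sport}' for name, _ in names.most_common(top_n)]


text = ("Sachin Tendulkar hit a century. The crowd cheered! "
        "Later, Sachin Tendulkar faced a googly from Shane Warne.")
sentences = segment_sentences(text)
names = candidate_names(sentences)
print(len(sentences), names.most_common())
# 3 [('Sachin Tendulkar', 2), ('Shane Warne', 1)]
print(build_search_queries(names, "cricket"))
# ['"Sachin Tendulkar" cricket', '"Shane Warne" cricket']
```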

- Typical Customization/Domain Adaptation Timeline:

- Between 4 and 7 days from start to finish.

- For a typical sport, approximately 5-10 million sentences of contextually relevant data are produced. This process takes about 3-5 days to gather and process the data ready for training AI models.

- For a range of sports (e.g., the Olympics), the processing and associated time should be scaled up based on the number of sports.

- On completion of data gathering and training preparation processing, the Foundation Model can be trained incrementally to include the new vocabulary. This typically takes about 4 hours.

- Some websites are deeper than others and may take longer to crawl. Although crawling and processing can be very fast, the crawl rate is deliberately kept low so as not to overload the website or appear to be attacking it; crawling a website too quickly results in being blocked. As such, the timeline will vary depending on the specific sites being crawled. Example synthesized outputs can be seen below.
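Deliberately slow crawling is usually enforced per host. The Python sketch below enforces a minimum interval between requests to the same host; it is one common way to do this, not necessarily how Language Studio's crawler works, and the `min_interval_s` value is a hypothetical setting. A simulated clock is injected so the example runs instantly.

```python
import time
from urllib.parse import urlparse


class HostThrottle:
    """Enforce a minimum interval between requests to the same host.

    `min_interval_s` is a hypothetical setting; a suitable value depends on each
    site (for example, a Crawl-delay in its robots.txt, where one is given).
    """

    def __init__(self, min_interval_s: float, clock=time.monotonic, sleep=time.sleep):
        self.min_interval_s = min_interval_s
        self._clock = clock
        self._sleep = sleep
        self._next_allowed: dict[str, float] = {}

    def wait(self, url: str) -> float:
        """Block until the URL's host may be requested again; return seconds waited."""
        host = urlparse(url).netloc
        now = self._clock()
        delay = max(0.0, self._next_allowed.get(host, now) - now)
        if delay > 0:
            self._sleep(delay)
        self._next_allowed[host] = now + delay + self.min_interval_s
        return delay


class FakeClock:
    """Simulated time so the example runs instantly."""

    def __init__(self) -> None:
        self.t = 0.0

    def now(self) -> float:
        return self.t

    def sleep(self, seconds: float) -> None:
        self.t += seconds


clock = FakeClock()
throttle = HostThrottle(2.0, clock=clock.now, sleep=clock.sleep)
waits = [throttle.wait("https://example.org/a"),
         throttle.wait("https://example.org/b"),
         throttle.wait("https://example.com/x")]
clock.t += 0.5
waits.append(throttle.wait("https://example.org/c"))
print(waits)  # [0.0, 2.0, 0.0, 1.5]
```

Requests to a different host are not delayed, so a crawler can stay polite to each site while still working on several sites in parallel.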

ASR Customization / Domain Adaptation Workflow

Data Synthesis for ASR Domain Adaptation Examples:

Automated prompt engineering is used to process all the terminology and vocabulary and guide the AI models to synthesize hundreds of thousands of sentences that use sport-specific terminology and vocabulary in a domain-specific context. A subset of the results for running and cricket is shown below.

- And there he goes, Usain Bolt taking the lead with his signature smooth stride.

- Oh my goodness, did you see that speed? Usain Bolt just set a new world record!

- Usain Bolt is clearly in top form, he’s pulling away from the pack with ease.

- The crowd is on their feet as Usain Bolt crosses the finish line in first place.

- This is it, the moment we’ve all been waiting for, Usain Bolt is about to begin his race.

- Usain Bolt’s signature pose is a clear sign that he’s ready to take on the competition.

- Look at the grace and power in Usain Bolt’s stride, it’s no wonder he’s the fastest man in the world.

- Usain Bolt just took the lead, and it looks like he’s not going to let go any time soon.

- He’s done it again, Usain Bolt has broken another world record and set a new standard for sprinters everywhere.

- The anticipation is building as Usain Bolt prepares for the start, the excitement is palpable.

- …

- And there he is, Sachin Tendulkar entering the field, the crowd is going wild!

- Sachin Tendulkar has just hit a stunning century, a true testament to his skill and experience.

- What a shot! Sachin Tendulkar just hit a six that cleared the stands.

- The Indian team is relying on Sachin Tendulkar to bring them back into the game.

- The bowler just delivered a full toss, and Sachin Tendulkar sent it flying over the boundary.

- Sachin Tendulkar is playing with poise and confidence, it’s a joy to watch.

- It’s clear that Sachin Tendulkar has done his homework, he’s reading the bowlers well and making the right shots.

- The crowd is on their feet as Sachin Tendulkar prepares to face the next ball.

- Sachin Tendulkar just hit a beautifully timed straight drive, a true example of his mastery of the game.

- Sachin Tendulkar has been the backbone of the Indian team for years, and he’s showing no signs of slowing down.

- …
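The actual prompts behind these results are not reproduced here, but the idea of generating prompts automatically from terminology lists can be sketched in Python. The template wording below is hypothetical, and the call to a large language model is deliberately omitted; the sketch only shows one way to pair each name with sport vocabulary so that every synthesized sentence carries both.

```python
from itertools import product

# Hypothetical prompt template; the actual prompts used for data synthesis are not shown.
PROMPT_TEMPLATE = (
    "You are a live {sport} commentator. Write {count} different sentences you might "
    "say on air that mention {name} and use the term '{term}' correctly. "
    "Vary the situation, tone and sentence length."
)


def build_prompts(sport: str, names: list[str], terms: list[str], count: int = 10) -> list[str]:
    """Create one prompt per (name, term) pair so every name appears alongside sport vocabulary."""
    return [
        PROMPT_TEMPLATE.format(sport=sport, count=count, name=name, term=term)
        for name, term in product(names, terms)
    ]


prompts = build_prompts("cricket", ["Sachin Tendulkar"], ["googly", "reverse swing", "clean bowled"])
print(len(prompts))  # 3
print(prompts[0])
```

Pairing every name with every term grows quickly: 50 names and 40 terms give 2,000 prompts, or 20,000 sentences at 10 per prompt. The responses would still need filtering and deduplication before being used for training.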

Subtitle Formatting

Language Studio Advanced Media Processing (AMP) provides a wide range of subtitle formatting settings that can be fine-tuned to the specific needs of a broadcaster. Settings go well beyond standard features such as lines per subtitle and maximum characters per line to include reading speed, frame rates and other advanced formatting options.
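To make the interplay of these settings concrete, the Python sketch below checks a subtitle against line-count and line-length limits and computes how many frames it must stay on screen at a given reading speed. The parameter names and values (37 characters per line, 17 characters per second, 25 fps) are illustrative assumptions, not AMP's setting names or defaults. Counting spaces but not the line break is one convention among several.

```python
import math


def layout_problems(lines: list[str], max_lines: int, max_chars_per_line: int) -> list[str]:
    """List violations of line-count and line-length limits (empty if the subtitle is valid)."""
    problems = []
    if len(lines) > max_lines:
        problems.append(f"{len(lines)} lines > {max_lines}")
    for number, line in enumerate(lines, start=1):
        if len(line) > max_chars_per_line:
            problems.append(f"line {number}: {len(line)} chars > {max_chars_per_line}")
    return problems


def min_display_frames(lines: list[str], max_cps: float, fps: float, min_seconds: float = 1.0) -> int:
    """Frames a subtitle must stay on screen to be readable at `max_cps` characters per second."""
    chars = sum(len(line) for line in lines)
    seconds = max(min_seconds, chars / max_cps)
    return math.ceil(seconds * fps)  # round up to a whole frame


subtitle = ["Usain Bolt crosses the finish line", "in first place."]
print(layout_problems(subtitle, max_lines=2, max_chars_per_line=37))  # []
print(min_display_frames(subtitle, max_cps=17, fps=25))  # 73
print(min_display_frames(["Goal!"], max_cps=17, fps=25))  # 25
```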

Of particular importance are the AI-powered splitting algorithms, which ensure that subtitles are split at natural positions and displayed much as a human linguist would adjust and split them.
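The AMP splitting algorithms are AI-powered and are not described here. As a much simpler stand-in, the rule-based Python sketch below shows the kind of judgment involved in splitting a subtitle into two lines: it prefers balanced lines and breaks after punctuation, and it penalizes ending a line on an article, preposition, or conjunction, or splitting a probable name. The word list and the score weights are arbitrary assumptions, and the sketch handles two-line subtitles only.

```python
# Words a line should not end on, because they belong with what follows.
WEAK_ENDINGS = {"a", "an", "the", "of", "to", "in", "on", "at", "as",
                "and", "or", "but", "for", "with", "his", "her", "their"}
PUNCTUATION = ",.;:!?"


def split_two_lines(text: str, max_chars: int) -> list[str]:
    """Split text into at most two lines, choosing the most natural break that fits."""
    words = text.split()
    if len(" ".join(words)) <= max_chars:
        return [" ".join(words)]
    best_score, best_lines = None, None
    for i in range(1, len(words)):
        first, second = " ".join(words[:i]), " ".join(words[i:])
        if len(first) > max_chars or len(second) > max_chars:
            continue
        last, following = words[i - 1], words[i]
        score = abs(len(first) - len(second))  # prefer balanced lines
        if last[-1] in PUNCTUATION:
            score -= 20  # reward breaking after punctuation
        if last.lower().strip(PUNCTUATION) in WEAK_ENDINGS:
            score += 30  # penalize a dangling article, preposition or conjunction
        if last[0].isupper() and following[0].isupper():
            score += 30  # penalize splitting a probable name such as "Usain / Bolt"
        if best_score is None or score < best_score:
            best_score, best_lines = score, [first, second]
    if best_lines is None:
        raise ValueError("text does not fit in two lines of max_chars")
    return best_lines


print(split_two_lines("The crowd is on their feet as Usain Bolt crosses the finish line", 37))
# ['The crowd is on their feet', 'as Usain Bolt crosses the finish line']
print(split_two_lines("What a shot, Sachin Tendulkar just hit a six", 32))
# ['What a shot,', 'Sachin Tendulkar just hit a six']
```

Without the name and weak-ending penalties, the first example would break as "...on their feet as" / "Usain Bolt..." or split "Usain" from "Bolt", both of which read poorly.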

The combination of these settings ensures optimal rendering of subtitles and a better viewing and reading experience for people watching the broadcast.

Example Subtitle Formatting Settings

High-Availability and Fault Tolerance

High Availability (HA) planning is designed to ensure system uptime and to minimize or eliminate downtime. A live video stream has no room for system outages, and each major element needs redundancy built into its design and deployment plan.

- Real-time updates are not required, as live sporting events have gaps between games or events during which upgrades and system updates can be performed. This makes managing the systems significantly easier than managing always-up systems.

- As the servers that run the Language Studio Enterprise platform are situated in the Broadcast Center, they are easier to manage and control.

- As the system processes live audio to produce subtitles in real time, any outage or failure will be highly visible. Redundant hardware on hot standby is implemented, together with monitoring of both hardware and software performance and automated switching to the hot-standby system in the event of any failure or performance degradation.

- Language Studio Enterprise provides comprehensive performance and stability metrics via Healthcheck APIs that can be leveraged by enterprise grade load-balancing and monitoring systems.
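The automated switching described above can be sketched as a selector that polls each node's health and routes traffic to the first healthy one. In the Python sketch below, `check` is any callable returning True for a healthy node; in practice it might wrap an HTTP call to a Healthcheck API plus a latency threshold, but the endpoint, thresholds, and node names here are hypothetical. In a real deployment this logic would typically live in the enterprise load balancer or monitoring system rather than in custom code.

```python
from typing import Callable, Iterable, Optional


class FailoverSelector:
    """Route traffic to the first healthy node in priority order (primary first).

    A node counts as down only after `failure_threshold` consecutive failed
    checks, so a single missed response does not trigger a switch.
    """

    def __init__(self, nodes: Iterable[str], check: Callable[[str], bool], failure_threshold: int = 3):
        self.nodes = list(nodes)
        self.check = check
        self.failure_threshold = failure_threshold
        self._failures = {node: 0 for node in self.nodes}

    def poll(self) -> Optional[str]:
        """Run one round of health checks and return the node that should receive traffic."""
        for node in self.nodes:
            self._failures[node] = 0 if self.check(node) else self._failures[node] + 1
        for node in self.nodes:
            if self._failures[node] < self.failure_threshold:
                return node
        return None  # every node is down; the caller should raise an alert


# Simulated health states for two hypothetical ASR nodes.
healthy = {"asr-primary": True, "asr-standby": True}
selector = FailoverSelector(["asr-primary", "asr-standby"], check=lambda node: healthy[node])
print(selector.poll())  # asr-primary
healthy["asr-primary"] = False
print([selector.poll() for _ in range(3)])  # ['asr-primary', 'asr-primary', 'asr-standby']
```

As written, traffic returns to the primary as soon as it passes a single check again. During a live event, it may be preferable to stay on the standby until a scheduled break, which would need an additional "sticky" rule.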

Benefits

- Thanks to the ASR customization, player names, team names, sport-specific slang, and other vocabulary were correctly recognized by the speech recognition processing.

- Due to this voice recognition optimization, fewer transcription errors were encountered, which resulted in a much higher-quality viewing experience.

- Subtitles were presented on viewers' screens at the same time the words were spoken by the commentator, rather than after a delay.

- The primary objective, enabling viewers to understand the commentator in environments where they could not hear the audio, was achieved.

- High-quality live closed captioning resulted not only in a better viewing experience, but also in viewers watching longer and in growth in the number of users watching the live broadcast. Growth also came from reaching a wider audience.