What is Neural Machine Translation (NMT)?

Neural Machine Translation (also known as Neural MT, NMT, Deep Neural Machine Translation, Deep NMT, or DNMT) is a state-of-the-art machine translation approach that uses neural network techniques to predict the likelihood of a sequence of words. The sequence can be a text fragment, a complete sentence, or, with the latest advances, an entire document.
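To make "predicting the likelihood of a sequence of words" concrete: an NMT model typically scores a target sentence by multiplying the probabilities of each word given the words before it (and the source sentence). The minimal sketch below assumes a hypothetical, hand-written table `TOY_MODEL` standing in for a trained network; the words and probabilities are illustrative only.

```python
import math

# Hypothetical toy "model": maps the target prefix produced so far to a
# distribution over the next word. A real NMT system computes these
# probabilities with a neural network conditioned on the source sentence.
TOY_MODEL = {
    (): {"the": 0.6, "a": 0.4},
    ("the",): {"house": 0.7, "home": 0.3},
    ("the", "house"): {"</s>": 0.9, "is": 0.1},
}


def sequence_log_prob(words):
    """Sum of log P(word_t | words_<t), i.e. the log of the chain-rule product."""
    total = 0.0
    for t, word in enumerate(words):
        prefix = tuple(words[:t])
        total += math.log(TOY_MODEL[prefix][word])
    return total


sentence = ["the", "house", "</s>"]
print(math.exp(sequence_log_prob(sentence)))  # approximately 0.378 (0.6 * 0.7 * 0.9)
```

Working in log space, as here, is the usual way to avoid numerical underflow when many probabilities are multiplied together.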

NMT is a radically different approach to language translation and localization: it uses deep neural networks and artificial intelligence to train neural models. NMT quickly became the dominant approach to machine translation, with a major transition from SMT to NMT in just three years. Neural Machine Translation typically produces much higher-quality translations than Statistical Machine Translation, with better fluency and adequacy.

Neural machine translation uses only a fraction of the memory required by traditional Statistical Machine Translation (SMT) models. NMT also differs from conventional SMT systems in that all parts of the neural translation model are trained jointly (end-to-end) to maximize translation performance.

Unlike a traditional phrase-based translation system, which consists of many small sub-components that are tuned separately, neural machine translation attempts to build and train a single, large neural network that reads a sentence and outputs a correct translation.

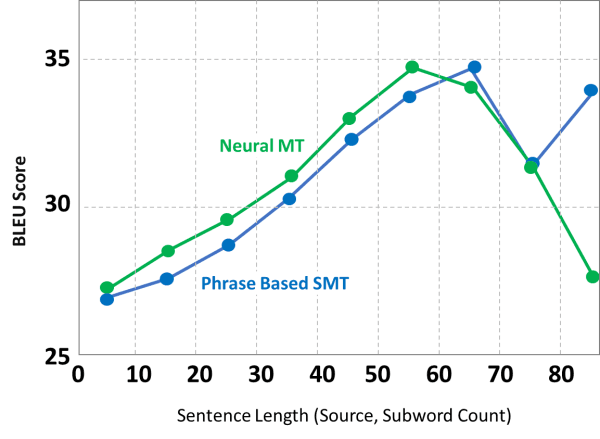

However, SMT systems should not be written off completely, as there are many cases where SMT produces a better-quality translation than NMT. For this reason, Omniscien has taken a Hybrid Machine Translation approach that seamlessly integrates the strengths of both technologies to deliver higher-quality translation output.

As the chart above shows, SMT performs better than NMT in some scenarios. Regardless of the translation technology, the highest-quality translations are always produced by customizing machine translation for a specific purpose and domain.

As with all machine learning technologies, the right data delivers better translation quality. Language Studio includes the tools and technologies to gather, process, and synthesize the data needed for training. With sufficient in-domain data, Neural MT is able to “think” more like the human brain when producing translations. Unlike with SMT, the data required can exceed 50 million bilingual sentences just as a starting point. Omniscien provides much of this data, and mines and synthesizes millions of new bilingual sentences for each engine.

Behind the design of many of these tools is Omniscien’s Chief Scientist, Professor Philipp Koehn, who leads our team of researchers and developers. Philipp is a pioneer in the machine translation field. His books on Statistical Machine Translation and Neural Machine Translation are the leading academic textbooks on machine translation worldwide. Both books are available now from Amazon.com and leading bookstores.

Language Studio provides state-of-the-art neural machine translation tools

- 600+ Language Pairs

- Real-Time: 2,000 words per second

- State-of-the-art Deep Neural Machine Translation

- Document-level translation for higher accuracy and better context.

- Custom machine translation engines with advanced domain adaptation processing.


Understand Terminology

What is Deep NMT?

Deep Neural Machine Translation is a modern technology based on Machine Learning and Artificial Intelligence (AI). Deep learning is a sub-field of Machine Learning which is inspired by the structure and functions of the human brain. Deep Neural Machine Translation (Deep NMT) is an extension of Neural Machine Translation (NMT).

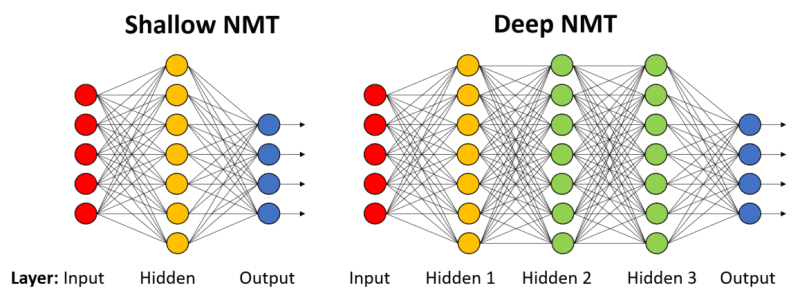

Both Shallow and Deep NMT use a large neural network; the difference is that Deep NMT processes multiple neural network layers instead of just one. As a result, Deep NMT produced better machine translation quality than any approach before it. Deep encoders in particular have been shown to be effective in improving neural machine translation systems.

Typically, a Deep NMT network has an input layer, an output layer, and several hidden layers that perceive, process, and deliver results accordingly. In other words, deep learning is a group of neural network algorithms that can, to some extent, imitate the way humans learn, such as understanding patterns or recognizing different people and objects. Instead of electrical neural signals, it works with numerical data converted from real-world images, videos, text, and so on.

Early NMT systems were based on Shallow NMT, with fewer layers. As the technology advanced, it became possible to use more layers and further improve accuracy and translation quality.
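The shallow/deep distinction comes down to how many hidden layers the input passes through before reaching the output. The following minimal sketch is a generic fully connected network, not an NMT model: it assumes hand-picked, hypothetical weights (`step_layer`) and a ReLU activation, and simply shows the same forward pass run with one hidden layer versus several.

```python
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]
Layer = Tuple[Matrix, Vector]


def dense(weights: Matrix, bias: Vector, x: Vector) -> Vector:
    """One fully connected layer: y = W x + b."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(x: Vector) -> Vector:
    return [max(0.0, v) for v in x]


def forward(layers: List[Layer], x: Vector) -> Vector:
    """Pass the input through every (weights, bias) layer in turn.

    Hidden layers apply ReLU; the final layer is left linear as the output layer.
    """
    for i, (w, b) in enumerate(layers):
        x = dense(w, b, x)
        if i < len(layers) - 1:
            x = relu(x)
    return x


# Hypothetical 2-unit layer reused at every depth for readability;
# a trained network would have different learned weights per layer.
step_layer: Layer = ([[1.0, 0.0], [0.0, 1.0]], [0.5, 0.5])
shallow = [step_layer, step_layer]  # 1 hidden layer + output layer
deep = [step_layer] * 6  # 5 hidden layers + output layer

print(forward(shallow, [1.0, -2.0]))  # [2.0, 0.5]
print(forward(deep, [1.0, -2.0]))  # [4.0, 2.5]
```

Each extra hidden layer is another learned transformation of the previous layer's output, which is what gives deeper networks more capacity to model complex patterns.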

Neural Machine Translation Technology

Language Studio uses the latest state-of-the-art translation technologies. Our custom machine translation engines are built on Recurrent Neural Network (RNN) technology and Google’s Transformer model technology.

Recurrent Neural Network (Miceli Barone et al. 2017)

Recurrent Neural Networks (RNNs) are a class of neural networks that allow previous outputs to be used as inputs while maintaining hidden states. Deep RNNs with Deep Transition Cells are one of the more advanced forms of RNN, and Omniscien uses them as the basis for many of our custom NMT engines.

RNNs are networks of neuron-like nodes organized into successive layers. Each node in a given layer is connected by a directed (one-way) connection to every node in the next layer. Each node (neuron) has a time-varying, real-valued activation, and each connection (synapse) has a modifiable, real-valued weight. Nodes are either input nodes (receiving data from outside the network), output nodes (yielding results), or hidden nodes (modifying the data en route from input to output).

In a conventional feed-forward neural network, each input is independent of the others. But tasks involving sequential dependencies cannot be solved under that assumption. For example, to predict the next word in a sentence, we need to know which words came before it. This is where recurrent neural networks come in: they perform the same task for every element of a sequence, with each output depending on the previous computations.
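The recurrence described above can be sketched with a single Elman-style update, h_t = tanh(w_x · x_t + w_h · h_(t-1) + b), applied with the same weights at every position. This minimal sketch assumes a scalar hidden state and hypothetical weight values for readability; real RNNs (including the deep transition cells mentioned earlier) use learned weight matrices and vector-valued states.

```python
import math
from typing import List


def rnn_step(x_t: float, h_prev: float, w_x: float, w_h: float, b: float) -> float:
    """One recurrent step: the new state mixes the current input with the previous state."""
    return math.tanh(w_x * x_t + w_h * h_prev + b)


def run_rnn(inputs: List[float], w_x: float = 0.8, w_h: float = 0.5, b: float = 0.0) -> List[float]:
    """Apply the SAME weights at every position, so each state depends on all earlier inputs."""
    h = 0.0  # initial hidden state: nothing has been read yet
    states = []
    for x_t in inputs:
        h = rnn_step(x_t, h, w_x, w_h, b)
        states.append(h)
    return states


# The final input is identical in both sequences, but the final hidden
# states differ because the hidden state remembers different histories.
print(run_rnn([1.0, 0.0, 0.0])[-1])
print(run_rnn([0.0, 0.0, 0.0])[-1])  # 0.0
```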

In other words, RNNs have “memory” cells that capture information about what has been seen so far. In theory, RNNs can use information from arbitrarily long sequences, but in practice they can look back only a few steps. In machine translation, we often need long-range information, which has to travel sequentially through every cell before reaching the cell currently being processed. During training, the error signal passed back along this path is multiplied by many small numbers, so it can shrink until it is effectively lost. This is known as the vanishing gradient problem.
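The "multiplied many times by small numbers" effect can be computed directly for a scalar RNN h_t = tanh(w_h · h_(t-1) + x). By the chain rule, the gradient of the last state with respect to the first is a product of one factor w_h · (1 − h_t²) per time step. This minimal sketch assumes hypothetical values w_h = 0.5 and a constant input x = 0.3; with |w_h| < 1 every factor is below 0.5, so the product collapses as the sequence grows.

```python
import math


def gradient_through_time(num_steps: int, w_h: float = 0.5, x: float = 0.3) -> float:
    """d h_T / d h_0 for the scalar RNN h_t = tanh(w_h * h_{t-1} + x).

    Each step contributes the local derivative w_h * (1 - h_t**2),
    since d/dz tanh(z) = 1 - tanh(z)**2.
    """
    h = 0.0
    grad = 1.0
    for _ in range(num_steps):
        h = math.tanh(w_h * h + x)
        grad *= w_h * (1.0 - h * h)  # one small factor per step travelled back
    return grad


for steps in (1, 5, 10, 20):
    print(steps, gradient_through_time(steps))
```

After 20 steps the gradient is below one millionth, so the learning signal from distant words barely reaches the early part of the network.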

RNNs have difficulty learning long-range dependencies and interactions between words that are several steps apart. That is problematic because the meaning of an English sentence is often determined by words that are not close together: “The man who wore a wig on his head went inside”. The sentence is really about a man going inside, not about the wig, but a plain RNN is unlikely to capture that.

Google Transformer Model (Vaswani et al. 2017)

The Transformer architecture is more computationally efficient than RNN-based models. It is one of the most promising model structures, using the self-attention mechanism to capture semantic dependencies from a global view of the sentence.
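The core of self-attention is scaled dot-product attention, softmax(Q·Kᵀ / √d_k)·V (Vaswani et al. 2017): every position is scored against every other position in a single step, rather than information being passed along one word at a time as in an RNN. This minimal pure-Python sketch assumes hypothetical 2-dimensional embeddings and, as a simplification, uses the embeddings directly as queries, keys, and values instead of applying learned projections.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(k[0])
    out = []
    for q_row in q:
        # Score this query against every key, i.e. every position in the sentence.
        scores = [sum(a * b for a, b in zip(q_row, k_row)) / math.sqrt(d_k) for k_row in k]
        weights = softmax(scores)
        # The output is a weighted average of all value vectors.
        out.append([sum(w * v_row[j] for w, v_row in zip(weights, v)) for j in range(len(v[0]))])
    return out


# Hypothetical embeddings for a 3-token sentence.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
# Self-attention: queries, keys and values all come from the same sequence.
for row in attention(x, x, x):
    print([round(val, 3) for val in row])
```

Because each query's scores are independent of the others, all positions can be computed in parallel, which is the source of the efficiency advantage over step-by-step recurrence.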

Transformer models are an advanced approach to machine translation built on attention-based layers. The Transformer introduced a new type of architecture based on self-attention layers, and it does not use the recurrent layers of the RNN approach (or convolutional layers). Instead, it uses parallel attention layers whose outputs are concatenated and then fed to a position-wise feed-forward layer.
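The "parallel attention layers whose outputs are concatenated and then fed to a position-wise feed-forward layer" can be sketched as follows. This is a simplified illustration under stated assumptions: each head attends over a fixed slice of the embedding instead of its own learned query/key/value projections, the output projection, residual connections and layer normalization are omitted, and all weights and embeddings are hypothetical.

```python
import math
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def softmax(row: Vector) -> Vector:
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(k[0])
    out = []
    for q_row in q:
        scores = [sum(a * b for a, b in zip(q_row, k_row)) / math.sqrt(d_k) for k_row in k]
        weights = softmax(scores)
        out.append([sum(w * v_row[j] for w, v_row in zip(weights, v)) for j in range(len(v[0]))])
    return out


def multi_head_self_attention(x: Matrix, num_heads: int) -> Matrix:
    """Run several attention heads independently and concatenate their outputs.

    Simplification (assumption): each head sees a fixed slice of the embedding
    rather than learned Q/K/V projections; the output projection is omitted.
    """
    d_model = len(x[0])
    if d_model % num_heads != 0:
        raise ValueError("d_model must be divisible by num_heads")
    d_head = d_model // num_heads
    heads = []
    for h in range(num_heads):
        part = [row[h * d_head:(h + 1) * d_head] for row in x]
        heads.append(attention(part, part, part))  # heads do not depend on each other
    # Concatenate the head outputs position by position.
    return [[val for head in heads for val in head[i]] for i in range(len(x))]


def linear(vec: Vector, w: Matrix, b: Vector) -> Vector:
    """vec @ w + b, with w stored as [input_dim][output_dim]."""
    return [sum(vec[i] * w[i][j] for i in range(len(vec))) + b[j] for j in range(len(b))]


def position_wise_ffn(x: Matrix, w1: Matrix, b1: Vector, w2: Matrix, b2: Vector) -> Matrix:
    """FFN(x) = max(0, x W1 + b1) W2 + b2, applied to each position independently."""
    return [linear([max(0.0, h) for h in linear(row, w1, b1)], w2, b2) for row in x]


# Hypothetical 4-dimensional embeddings for a 3-token sentence, split into 2 heads.
x = [[1.0, 0.0, 0.5, 0.5], [0.0, 1.0, 0.5, -0.5], [1.0, 1.0, 0.0, 1.0]]
mixed = multi_head_self_attention(x, num_heads=2)

# Hypothetical identity weights keep the example readable; real FFN weights
# are learned, and the inner layer is usually wider than the model dimension.
identity = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
zeros = [0.0] * 4
out = position_wise_ffn(mixed, identity, zeros, identity, zeros)
print(out)
```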

Transformer models reduce the number of sequential operations by using a multi-head attention mechanism. They also eliminate recurrence and convolution entirely, relying instead on a self-attention-based, auto-regressive encoder-decoder: when generating the next symbol, the decoder uses the previously generated symbols as extra input.
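The auto-regressive part can be sketched as a decoding loop that feeds each generated token back in as input for the next step. The `toy_model` function below is a hypothetical stand-in for a trained decoder that simply emits a fixed German translation; the loop uses greedy selection for brevity, whereas production systems commonly use beam search.

```python
from typing import Callable, Dict, List

# Hypothetical decoder interface: (source tokens, tokens generated so far) -> next-token probabilities.
NextTokenFn = Callable[[List[str], List[str]], Dict[str, float]]


def greedy_decode(source: List[str], next_token_probs: NextTokenFn, max_len: int = 20) -> List[str]:
    """Generate one token at a time, feeding previous outputs back in."""
    output: List[str] = []
    for _ in range(max_len):
        probs = next_token_probs(source, output)  # conditioned on everything generated so far
        token = max(probs, key=probs.get)  # greedy: take the most likely token
        if token == "</s>":  # end-of-sentence marker stops generation
            break
        output.append(token)
    return output


def toy_model(source: List[str], prefix: List[str]) -> Dict[str, float]:
    """Hypothetical 'model' that produces a fixed translation word by word."""
    target = ["das", "Haus"]
    if len(prefix) < len(target):
        return {target[len(prefix)]: 0.9, "</s>": 0.1}
    return {"</s>": 1.0}


print(greedy_decode(["the", "house"], toy_model))  # ['das', 'Haus']
```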

Other Technologies and Techniques

- Multi-source models (Junczys-Dowmunt and Grundkiewicz, 2017)

- RNN and transformer-based language models

- Other features: layer normalization (Ba et al. 2016), tied embeddings (Press and Wolf, 2017), residual/skip connections
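Three of the features listed above fit in a few lines each. This minimal sketch shows layer normalization (normalize a vector to zero mean and unit variance, then scale and shift), a residual/skip connection in the post-norm arrangement LayerNorm(x + Sublayer(x)) used in the original Transformer (many newer implementations normalize before the sub-layer instead), and tied embeddings, where the input embedding matrix is reused to score output words. The sub-layer, vectors, and 2-word vocabulary are hypothetical.

```python
import math
from typing import Callable, List

Vector = List[float]


def layer_norm(x: Vector, gamma: Vector, beta: Vector, eps: float = 1e-5) -> Vector:
    """Normalize one vector to zero mean / unit variance, then scale by gamma and shift by beta."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [g * (v - mean) / math.sqrt(var + eps) + b for v, g, b in zip(x, gamma, beta)]


def residual_block(x: Vector, sublayer: Callable[[Vector], Vector]) -> Vector:
    """Skip connection around the sub-layer, followed by layer norm: LayerNorm(x + sublayer(x))."""
    y = [a + b for a, b in zip(x, sublayer(x))]
    n = len(x)
    return layer_norm(y, [1.0] * n, [0.0] * n)  # gamma=1, beta=0 (untrained defaults)


def tied_output_logits(hidden: Vector, embeddings: List[Vector]) -> Vector:
    """Tied embeddings: score each vocabulary word by the dot product of the
    hidden state with that word's INPUT embedding, so no separate output matrix is needed."""
    return [sum(h * e for h, e in zip(hidden, emb)) for emb in embeddings]


x = [1.0, 2.0, 3.0, 4.0]
out = residual_block(x, lambda v: [0.5 * a for a in v])  # hypothetical sub-layer
print([round(v, 3) for v in out])

vocab_embeddings = [[1.0, 0.0, 0.0, 0.0], [0.0, 0.0, 0.0, 1.0]]  # hypothetical 2-word vocabulary
print(tied_output_logits(out, vocab_embeddings))
```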

We have collected some insightful links that provide a solid overview, from the Omniscien team and several external research papers:

- Article: Stanford has a great Recurrent Neural Network Cheat Sheet

- Article: What is Transformer?

- Paper: A Survey of Deep Learning Techniques for Neural Machine Translation

- Paper: Deep Architectures for Neural Machine Translation

- Paper: Depth Growing for Neural Machine Translation

- Omniscien Blog: “Riding the Machine Translation Hype Cycle – From SMT to NMT to Deep NMT” by Dion Wiggins

- Omniscien Blog: “The State of Neural Machine Translation (NMT)” by Philipp Koehn

- Omniscien Webinars: NMT webinar series for a comprehensive introduction to Neural Machine Translation
