As the localization and translation industries have grown, so too has the volume of terms and jargon used by professionals. We’re so enthusiastic about the localization industry that we often get carried away and forget that these words can be intimidating to beginners.

While this may not be the only localization glossary that you ever need, we have tried to make it as comprehensive as possible. If you have any questions about terminology or would like to suggest terms to be added, please contact us.

We’ve created a comprehensive glossary of more than 140 basic localization terms to help you get up to speed with some of the more common localization terminology – and avoid looking like a novice at meetings.

Glossary Terms

101% Matching

One hundred and one percent matching is a technical term used in translation memory software to describe the amount of matching between the text of two strings. If a sentence matches a copy in memory and its context matches as well, this is considered 101% matching.
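As a minimal sketch of the idea, the distinction between a 100% and a 101% match can be expressed as a lookup that also compares context. The entry layout and the choice of the preceding segment as "context" are assumptions made for illustration; real translation memory tools define context in their own ways.

```python
def match_level(segment, prev_segment, tm_entry):
    """Return 101 for an exact match in the same context, 100 for an exact
    match in a different context, and 0 when the text does not match.

    `tm_entry` is a hypothetical dict with "source" and "prev_source" keys.
    """
    if segment != tm_entry["source"]:
        return 0
    # Assumption: context is the segment that preceded the source text.
    return 101 if prev_segment == tm_entry["prev_source"] else 100


entry = {"source": "Save", "target": "Enregistrer", "prev_source": "File menu"}
in_context = match_level("Save", "File menu", entry)
out_of_context = match_level("Save", "Toolbar", entry)
```

Here the first lookup is a 101% match because both the text and its context agree, while the second is only a 100% match.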

Adaptive MT / Adaptive Machine Translation

Adaptive machine translation adapts and improves a machine translation engine while the human post-editor corrects the machine translation output, rather than waiting for a later batch retraining. Adaptive machine translation is an example of online machine learning and human-in-the-loop (HITL).

Agile Localization

Agile localization is a set of tools and techniques used to merge localizing and translating into an agile product development cycle. This enables your team to work simultaneously on the same version of your product, while still maintaining translation quality through controlled translation approaches and QA processes.

AI

See Artificial Intelligence (AI)

Application Programming Interface

An Application Programming Interface (API), also known as an Application Program Interface, is a set of definitions and protocols that enable two software components to communicate with each other. Application Programming Interfaces give developers and organizations the ability to connect just about anything to just about anything else. For example, the weather bureau’s API allows the weather app on your phone to get daily updates of weather data from their system.

Language Studio has a comprehensive set of APIs that enable you to integrate the translation, transcription, Natural Language Processing, Optical Character Recognition (OCR), file format conversion and other features directly into your own localization workflows.

Artificial Intelligence (AI)

Artificial intelligence is a broad field of computer science. It consists of the study and design of intelligent agents, whether they are software applications, robots or something else. The basic problem can be stated in this way: “Given a certain situation, find the most appropriate action.” Although there is no universally accepted definition of artificial intelligence, most would agree that it includes the ability to see patterns in information and solve problems using those patterns to continue learning.

Artificial intelligence is a field of study that focuses on building systems and computers that are capable of performing tasks normally reserved for humans, such as language processing and image recognition. Although there are many definitions and approaches to what AI can be, artificial intelligence is often referred to as non-specific programming – or simply just software.

Artificial intelligence requires a high degree of critical thinking and creativity. It uses computers to mimic human behavior. A program that can translate languages is one example of artificial intelligence. Deep learning is a sub-field of artificial intelligence that uses neural networks with multiple layers stacked on top of each other.

Automated Quality Estimation

Back Translation / Reverse Translation

Back translation is a quality assurance process in which a document that’s been translated from one language is translated back into the original language to check that the meaning is accurate. It’s also used as a form of quality control to identify errors, ambiguities and cross-cultural misunderstandings in your content before publishing.

Baseline Translation

A baseline translation is a reference translation that serves as a benchmark for the performance of other machine translation systems. It is typically a simple machine translation system that serves as the starting point for more complex and sophisticated machine translation systems.

Baseline translations are used to evaluate the performance of machine translation systems by comparing the output of the systems to the reference translation. This can be done using a variety of metrics, such as translation error rate (TER), BLEU score, or human evaluation.

Baseline translations are typically created using rule-based or statistical machine translation systems, which are simpler and less accurate than more advanced machine translation systems such as neural machine translation systems. As such, they often produce translations that are less accurate and less fluent than those produced by more advanced systems.

Baseline translations are often used in the development and evaluation of new machine translation systems, as a way to measure the progress and improvement of the systems over time. They can also be used to evaluate the performance of different machine translation systems on a given dataset, or to compare the performance of different translation techniques or approaches.

BLEU Score

BLEU (Bilingual Evaluation Understudy) is a measure of the quality of machine translation. It compares a candidate translation with one or more reference translations by counting the word sequences (n-grams) they share. The score increases as the candidate gets closer to the references and decreases as it moves farther away.

See paper – “BLEU: a Method for Automatic Evaluation of Machine Translation” published by IBM T.J. Watson Research Center in 2002.
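To make the idea concrete, here is a simplified, sentence-level sketch of a BLEU-style score. It is an illustration rather than a reference implementation: it assumes whitespace tokenization, uses n-grams only up to length 2 (the paper uses up to 4), and applies no smoothing, so scores will not match those of standard evaluation tools.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count the n-grams of length n in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def simple_bleu(candidate, references, max_n=2):
    cand = candidate.split()
    refs = [r.split() for r in references]
    if not cand:
        return 0.0
    log_precisions = []
    for n in range(1, max_n + 1):
        cand_counts = ngrams(cand, n)
        # Clip each candidate n-gram count by its highest count in any reference.
        max_ref = Counter()
        for ref in refs:
            for gram, count in ngrams(ref, n).items():
                max_ref[gram] = max(max_ref[gram], count)
        clipped = sum(min(c, max_ref[g]) for g, c in cand_counts.items())
        total = sum(cand_counts.values())
        if total == 0 or clipped == 0:
            return 0.0  # no smoothing in this sketch
        log_precisions.append(math.log(clipped / total))
    # Brevity penalty: penalize candidates shorter than the closest reference.
    ref_len = min((len(r) for r in refs),
                  key=lambda length: (abs(length - len(cand)), length))
    bp = 1.0 if len(cand) > ref_len else math.exp(1 - ref_len / len(cand))
    return bp * math.exp(sum(log_precisions) / max_n)


reference = "the cat sat on the mat"
identical = simple_bleu("the cat sat on the mat", [reference])
close = simple_bleu("the cat sat on a mat", [reference])
unrelated = simple_bleu("dog", [reference])
```

An identical candidate scores 1.0, a candidate with one changed word scores lower, and a candidate that shares no words with the reference scores 0.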

Bitext

A bitext is a collection (usually electronic) of texts in two languages that can be considered translations of each other and that are aligned at the sentence or paragraph level. It is one of the most basic results of translation, and can be used in the language industry for training, revision, quality control, and machine translation.

Central Processing Unit

A central processing unit, also called a central processor, main processor or just processor, is the electronic circuitry that executes instructions comprising a computer program. The CPU performs basic arithmetic, logic, controlling, and input/output operations specified by the instructions in the program.

CLDR

See Common Locale Data Repository

Collaborative Translation

Collaborative translation is when a group of people with different skills and expertise work together on a translation project in a collaborative online workspace. The goal is to improve quality and cut total translation time by distributing various translation tasks across the group.

Common Locale Data Repository

The Common Locale Data Repository (CLDR) is a collaborative project of the Unicode Consortium, intended for software that needs a common base of locale data for collation, sorting and formatting. Applications rarely read the underlying data directly; instead, it is typically consumed through software libraries that use it to provide localized services.

The Common Locale Data Repository (CLDR) project is one of the best open source standard libraries for localizing an application. CLDR contains information about past and current locales, currencies, languages and transliteration schemes as well as data files that can be used as the source of translation between language codes, language names and their native spellings.

Compliance Regulations

Compliance regulations are laws, rules, and standards that organizations must follow in order to operate legally and ethically. These regulations may be industry-specific or may apply to all organizations in a particular jurisdiction. They are often put in place to protect the interests of consumers, employees, shareholders, and other stakeholders, and to ensure that organizations are transparent and accountable in their operations.

Compliance regulations may cover a wide range of areas, including financial reporting, privacy, data protection, cybersecurity, health and safety, and environmental protection. Some examples of compliance regulations include:

- The Sarbanes-Oxley Act (SOX), which establishes standards for financial reporting and internal controls for public companies in the United States

- The General Data Protection Regulation (GDPR), which regulates the collection, use, and protection of personal data in the European Union

- The Health Insurance Portability and Accountability Act (HIPAA), which sets standards for the protection of personal health information in the United States

- The Occupational Safety and Health Act (OSH Act), which establishes safety and health standards for the workplace in the United States

Organizations that fail to comply with relevant compliance regulations may face fines, legal action, and damage to their reputation. As such, compliance is an important consideration for organizations of all sizes and industries, and compliance programs and processes are often put in place to ensure that an organization is meeting its regulatory obligations.

Computer Assisted Translation / Computer Aided Translation (CAT)

Computer-Assisted Translation (CAT) is the process of translating text using software. The software can either be employed in different phases of translation or serve a variety of purposes throughout the process. CAT tools typically include translation memory, concordance and post-production features.

Concordance Search

Concordance searching is a way to find words or phrases in a translation memory that have previously been translated into your desired language. This helps translators produce more accurate and consistent results, and complete new translations more quickly, than if they were relying solely on contextual advice.
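A concordance search can be sketched as a simple lookup over stored segment pairs. The translation memory below is a hypothetical list of (source, target) tuples, and the case-insensitive substring match is a simplifying assumption; real tools usually add stemming, highlighting and ranking.

```python
def concordance_search(tm, phrase):
    """Return every (source, target) pair whose source contains the phrase."""
    needle = phrase.casefold()
    return [(source, target) for source, target in tm
            if needle in source.casefold()]


# Hypothetical English-to-French translation memory.
tm = [
    ("Click the Save button.", "Cliquez sur le bouton Enregistrer."),
    ("Save your changes before closing.",
     "Enregistrez vos modifications avant de fermer."),
    ("Close the window.", "Fermez la fenêtre."),
]

hits = concordance_search(tm, "save")
```

The translator can then review how "save" was rendered in each earlier segment before choosing a translation for the new one.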

Confidence Scores

Confidence scores play a crucial role in the realm of automated translation, as they help direct human translators to areas where they should concentrate their efforts and attention. Machine Translation (MT) confidence scores are typically integrated into the data and displayed within the Translation Management System (TMS) editor. These scores serve as a valuable metric for gauging machine translation accuracy and are an essential component of the translation quality assurance process.

Although confidence scores share similarities with Machine Translation Quality Estimation (MTQE), they are not identical. MTQE can be utilized both before and after the translation process to assess the quality of machine-generated translations. Both confidence scores and MTQE are instrumental in determining whether the translated strings between source and target languages are of high quality and accurately represent the intended meaning.

Key aspects of confidence scores and their role in automated translation include:

- Guiding Human Translators: By providing confidence scores, automated translation systems help human translators identify areas that require greater focus and improvement, leading to more efficient use of their time and resources.

- Accuracy Metrics: Confidence scores offer a general indication of machine translation accuracy, enabling organizations to assess the effectiveness of their automated translation systems and make informed decisions about translation quality.

- Quality Assurance: Incorporating confidence scores into the translation quality assurance process helps ensure that the final translations meet the desired quality standards and accurately convey the intended meaning.

- Comparison with MTQE: While both confidence scores and MTQE are involved in assessing translation quality, MTQE can be applied at various stages of the translation process and offers a more comprehensive evaluation of machine-generated translations.

- Enhancing Translation Workflow: Confidence scores and MTQE together contribute to streamlining the translation workflow by identifying areas that require attention and improvement, ultimately resulting in better quality translations and more efficient use of resources.
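The routing role described above can be sketched as a simple threshold check. The segment structure, the 0 to 1 score range and the 0.8 threshold are all assumptions chosen for illustration; each MT system and TMS defines its own scale and cut-off.

```python
def route_segments(segments, threshold=0.8):
    """Split segments into those needing human review and those accepted.

    Each segment is a hypothetical dict with "id" and "confidence" keys.
    Segments needing review are returned lowest-confidence first.
    """
    needs_review = [s for s in segments if s["confidence"] < threshold]
    accepted = [s for s in segments if s["confidence"] >= threshold]
    needs_review.sort(key=lambda s: s["confidence"])
    return needs_review, accepted


segments = [
    {"id": 1, "confidence": 0.95},
    {"id": 2, "confidence": 0.42},
    {"id": 3, "confidence": 0.78},
]
needs_review, accepted = route_segments(segments)
review_ids = [s["id"] for s in needs_review]
```

Sorting the review queue by ascending confidence puts the segments most likely to contain errors in front of the translator first.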

Content Management System

Content management systems (CMS) are a popular way for small businesses to manage their websites. They help amateur webmasters use templates to create a professional feel to their pages and quickly update them, without requiring extensive knowledge of HTML. These platforms also allow you to manage your emails and build landing pages for your product or service through email campaigns, without investing in any additional website development work.

Continuous Localization

Continuous Localization is a specialized approach within the broader domain of agile localization. It is characterized by the integration of localization processes into the product development lifecycle in a seamless and automated manner. This method streamlines the workflow and facilitates the simultaneous progression of localization and product development.

By removing manual processes such as uploading strings, tracking changes, and managing version control, continuous localization allows teams to save time and increase overall efficiency. Moreover, it helps in maintaining a higher quality standard for localized content.

Key aspects of continuous localization include:

- Integration with the development lifecycle: Continuous localization ensures that localization tasks are performed in parallel with product development, allowing for a more efficient workflow and quicker adaptation to changes in the source content.

- Automation: By automating processes like string uploads, change tracking, and version control, continuous localization reduces manual intervention, mitigating the risk of errors and improving the overall quality of localized content.

- Flexibility: Continuous localization is designed to adapt to the dynamic nature of agile development processes. This approach enables localization teams to accommodate frequent updates and modifications in the source content without disrupting the ongoing product development.

- Scalability: The automated and integrated nature of continuous localization allows it to scale with the growth of the product and the expansion of target markets. As a result, it can efficiently handle increased localization demands while maintaining high-quality standards.

- Quality improvement: By aligning localization with the product development lifecycle and leveraging automation, continuous localization helps teams consistently deliver high-quality localized content that meets the needs of diverse audiences.

Controlled Language

Controlled Language refers to a specialized form of language that aims to facilitate clear and straightforward communication for a specific purpose. It is particularly useful when creating content intended for machine translation or for diverse global audiences. The term “Controlled Language” encompasses a variety of approaches and initiatives, such as Plain Language, Simplified Technical English and Caterpillar Fundamental English.

The primary objective of effective controlled language initiatives is to select the most uncomplicated terms that accurately convey the intended meaning. To achieve this, these initiatives implement certain restrictions on various language aspects, including grammar, syntax, and verb forms. By simplifying the language and adhering to these guidelines, controlled language initiatives help ensure that the content is easily understood by a wide range of readers, including those with limited language proficiency or who are using machine translation tools.

Some key features of controlled language initiatives include:

- Vocabulary: Controlled language initiatives typically employ a limited and standardized set of words, focusing on selecting the most basic and easily understandable terms.

- Grammar: To make the content more accessible, controlled language initiatives often impose strict rules on grammar usage, simplifying sentence structures and reducing ambiguity.

- Syntax: By streamlining syntax, controlled language initiatives promote clarity and precision in communication, minimizing the likelihood of misinterpretation.

- Verb forms: Controlled language initiatives may restrict the use of certain verb forms, such as passive voice or complex tenses, in favor of simpler and more direct alternatives.

Through these measures, controlled language initiatives contribute to the creation of clear, concise, and easily translatable content, making it more accessible to a global audience and facilitating communication across language barriers.

CPU

See Central Processing Unit

Custom MT / Custom MT Engine

Dedicated MT Engine / Dedicated Machine Translation Engine

A Dedicated Machine Translation (MT) Engine is a translation engine that remains constantly loaded and ready to translate at any time. This is the opposite of a Load-On-Demand MT Engine, which is loaded into memory and consumes computing resources only when it is needed for translation tasks.

The key differences between Dedicated and Load-On-Demand MT Engines can be summarized as follows:

- Availability: A Dedicated MT Engine is always available and primed for translation tasks, ensuring faster response times for translation requests. Conversely, a Load-On-Demand MT Engine is activated only when needed, which may result in a slight delay before it is ready to process translations.

- Resource Consumption: Since a Dedicated MT Engine is constantly loaded, it consumes computing resources continuously, even during periods of inactivity. On the other hand, a Load-On-Demand MT Engine optimizes resource usage by consuming computing resources solely when a translation task is at hand, leading to more efficient resource allocation.

- Cost Implications: Maintaining a Dedicated MT Engine may entail higher costs due to the continuous consumption of computing resources. In contrast, a Load-On-Demand MT Engine can help control costs by consuming resources only when required for translations, making it a more budget-friendly option for organizations with fluctuating translation demands.

- Use Cases: A Dedicated MT Engine is well-suited for scenarios where translation services are in constant demand, necessitating a readily available translation engine. In contrast, a Load-On-Demand MT Engine is a better fit for situations where translation needs are sporadic or unpredictable, as it can be activated and deactivated based on the actual demand for translation services.
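The trade-off above can be sketched with two small classes. The class names, the `loader` callable and the toy "model" are hypothetical stand-ins used only to show when loading happens; they do not represent any particular product's API.

```python
class DedicatedEngine:
    """Loads the model once at start-up and keeps it in memory."""

    def __init__(self, loader):
        self._model = loader()

    def translate(self, text):
        return self._model(text)


class LoadOnDemandEngine:
    """Loads the model only when the first request arrives."""

    def __init__(self, loader):
        self._loader = loader
        self._model = None

    def translate(self, text):
        if self._model is None:
            # The first request pays the loading delay.
            self._model = self._loader()
        return self._model(text)

    def unload(self):
        """Release the model so that it stops consuming resources."""
        self._model = None


loads = []  # records each time the toy model is loaded


def load_toy_model():
    loads.append("loaded")
    # Toy "translation": reverse the text.
    return lambda text: text[::-1]
```

Creating a `DedicatedEngine` triggers a load immediately, while a `LoadOnDemandEngine` defers it until the first call to `translate` and can release the model again with `unload`.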

Deep Learning

Deep learning is a subfield of machine learning that is inspired by the structure and function of the brain, specifically the neural networks that make up the brain. It involves training artificial neural networks on a large dataset, allowing the network to learn and make intelligent decisions on its own.

Deep learning algorithms use multiple layers of artificial neural networks to learn and make decisions. Each layer processes the input data and passes it on to the next layer, until the final layer produces the output. As the data passes through the layers, the network learns to recognize patterns and features in the data, allowing it to make more accurate predictions or decisions.
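The layer-by-layer flow described above can be sketched as a forward pass through a tiny network. This is an illustration only: the weights are hand-picked rather than learned, ReLU is assumed as the activation function, and the training step that adjusts the weights is omitted.

```python
def relu(values):
    """Replace negative values with zero."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """Compute one fully connected layer: weights times inputs, plus biases."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def forward(inputs, layers):
    """Pass the input through each layer in turn and return the final output."""
    activations = inputs
    for weights, biases in layers:
        activations = relu(dense(activations, weights, biases))
    return activations


# Two hypothetical layers: 2 inputs -> 2 hidden units -> 1 output.
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]),
    ([[1.0, 1.0]], [-1.0]),
]
output = forward([2.0, 1.0], layers)
```

Each layer transforms the output of the previous one, which is the stacking that gives deep learning its name.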

Deep learning has been successful in a wide range of applications, including image and speech recognition, natural language processing, and even playing games like chess and Go. It has also been used in healthcare, finance, and other industries to analyze large datasets and make predictions or decisions based on that data.

Deep Neural Machine Translation (DNMT / Deep NMT)

Deep Neural Machine Translation is a new technology based on Machine Learning and Artificial Intelligence (AI). It is an extension of Neural Machine Translation (NMT). Both use a large neural network with the difference that Deep NMT processes multiple neural network layers instead of just one.

Direct Translation / Direct Language Pairs

Direct language pairs translate directly from one language to another (e.g. German – French). This technique is used by many translation tools such as Google Translate, Microsoft Translator and DeepL for more common language pairs. Less common language pairs often use pivoting (pivot language pairs), translating through an intermediate language such as English. Omniscien tools translate directly between 600+ language pair combinations, in addition to pivoting for lower resource language pairs.
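The difference between direct and pivot translation can be sketched as a routing decision. The `engines` dictionary and the toy engine functions are hypothetical placeholders that simply label the text; they stand in for real translation engines.

```python
def translate_with_pivot(text, src, tgt, engines, pivot="en"):
    """Use a direct engine when one exists, otherwise go through the pivot."""
    if (src, tgt) in engines:
        return engines[(src, tgt)](text)
    if (src, pivot) in engines and (pivot, tgt) in engines:
        intermediate = engines[(src, pivot)](text)
        return engines[(pivot, tgt)](intermediate)
    raise ValueError(f"No direct or pivot route from {src} to {tgt}")


# Toy engines that only tag the text with the route taken.
engines = {
    ("de", "fr"): lambda t: f"fr-direct({t})",
    ("th", "en"): lambda t: f"en({t})",
    ("en", "fr"): lambda t: f"fr({t})",
}

direct = translate_with_pivot("Hallo", "de", "fr", engines)
pivoted = translate_with_pivot("สวัสดี", "th", "fr", engines)
```

The German to French request uses the direct engine, while the Thai to French request is translated into English first and then into French, so any error in the first step carries over into the second.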

DTP

See Desktop Publishing (DTP)

Do-Not-Translate (DNT)

Do Not Translate (DNT) marks text that must never be translated. For example, DNT can be used for brand names, trademarks, and other important phrases that should remain in their source language.
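One common way to enforce a DNT list is to swap protected terms for placeholders before translation and restore them afterwards. The sketch below assumes a placeholder format of `__DNTn__` and uses `str.upper` as a stand-in for a real translation engine; both are illustrative choices, not how any specific tool works.

```python
def protect(text, dnt_terms):
    """Replace each DNT term found in the text with a unique placeholder."""
    mapping = {}
    # Longest terms first so that overlapping terms are handled predictably.
    for i, term in enumerate(sorted(dnt_terms, key=len, reverse=True)):
        placeholder = f"__DNT{i}__"
        if term in text:
            text = text.replace(term, placeholder)
            mapping[placeholder] = term
    return text, mapping


def restore(text, mapping):
    """Put the original DNT terms back in place of their placeholders."""
    for placeholder, term in mapping.items():
        text = text.replace(placeholder, term)
    return text


def translate_with_dnt(text, dnt_terms, translate):
    protected, mapping = protect(text, dnt_terms)
    return restore(translate(protected), mapping)


# str.upper stands in for a translation engine so the effect is visible.
result = translate_with_dnt("Open Language Studio now",
                            ["Language Studio"], str.upper)
```

The surrounding words are changed by the stand-in engine, while the protected product name comes back exactly as it was written in the source.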

Document Alignment

Document Alignment, also known as document pairing, is the process of automatically matching pairs of translated documents to each other. The input is a set of previous translations that were created by professional human translators. Artificial Intelligence is used to automatically determine pairs of localized documents that are translations of each other. Paired documents can then be processed with automated Bilingual Sentence Matching to create bilingual sentences.

There are often tens of thousands, hundreds of thousands, or in some cases even millions of documents that may or may not be translations of each other. With that many documents, it is frequently impossible to determine manually which ones are translations. This process is often used when crawling web sites and matching web-based content.

Language Studio provides advanced AI-powered tools for document alignment, where you can upload thousands of documents in different languages and formats and have the matching translations paired automatically.

Document-Level Machine Translation

Document-level machine translation refers to the process of translating an entire document or large chunks of a document, rather than just individual sentences or phrases. This type of translation is often used for longer documents such as subtitles, reports, articles, and books, as it can provide a more cohesive and coherent translation of the entire document.

In document-level machine translation, the translation system takes into account the context and structure of the entire document, rather than just the individual sentences. This can help to improve the overall quality of the translation, as the system is able to better understand the relationships between different parts of the document and how they fit together.

It is also often used for business documents, legal documents, and other types of documents that require a high level of accuracy and consistency.

To perform document-level machine translation, the machine translation system typically analyzes the entire document to understand its context and structure, and then uses this information to produce a translation that is accurate and faithful to the original document. This process can be aided by the use of translation memories, glossaries, and other resources that help the system to produce more accurate translations.

Document-level machine translation is often preferred over sentence-level machine translation for important documents because it can produce translations that are more accurate and consistent, and that better capture the intended meaning of the original document. However, it can also be more time-consuming and resource-intensive, as it requires the system to analyze the entire document rather than just individual sentences or phrases.

Language Studio fully supports Document-Level Machine Translation.

Domain Adaptation

Domain adaptation has become a topic of critical importance in machine translation. The models used to translate out-of-domain text struggle to accurately translate when they are faced with linguistic concepts and idioms that differ greatly from those that they were trained on.

Domain adaptation is a challenging task for every machine translation model. Having large amounts of training data helps, but domain adaptation is still an issue because the models are trained using data from one domain and later used to translate text from another.

Language Studio provides an extensive set of tools specifically built for rapid domain adaptation. Competitors use a rather basic approach of uploading your own translation memories, such as those exported from a Translation Management System, and adding them to the existing translation memories. However, that approach does not deliver any level of control. It has earned itself the label “Upload and Pray”.

The Language Studio approach creates between 20 and 40 million high-quality translation segments that are specific to the use case of the end user.

DNT

See Do-Not-Translate

DNMT

See Deep Neural Machine Translation

Desktop Publishing (DTP)

Desktop Publishing (DTP) is the process of laying out localized documents in the target language while preserving the original design. This is done by a design specialist and is necessary when the source text is part of a layout, because text length or direction may change in translation. DTP ensures that the text still looks as it should once it has been translated into a different language.

Edit Distance

Edit Distance, also known as Levenshtein Distance, is a measure of the difference between two strings: the smaller the distance, the more similar the strings. It is defined as the minimum number of single-character edits (insertions, deletions, or substitutions) required to transform one string into another.

For example, the Edit Distance between the strings “kitten” and “sitting” is 3, because the following three edits are required to transform one into the other:

- kitten -> sitten (substitute “s” for “k”)

- sitten -> sittin (substitute “i” for “e”)

- sittin -> sitting (insert “g”)

Edit Distance is a useful measure of the similarity between strings, and is often used in natural language processing and information retrieval to compare and evaluate the similarity of words, phrases, and documents. It can also be used in spell checkers and other applications where the ability to detect and correct errors in a string is important.

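There are various algorithms for calculating the Edit Distance between two strings. The standard dynamic programming algorithm fills in a table of distances between prefixes of the two strings, taking time proportional to the product of the two string lengths. Because each row of the table depends only on the previous row, an iterative version that keeps just two rows reduces the memory needed to be proportional to the length of the shorter string.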
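As an illustration, the dynamic programming approach can be written in a few lines. This sketch keeps only two rows of the distance table at a time; the function name is our own choice.

```python
def levenshtein(a, b):
    """Return the minimum number of single-character edits turning a into b."""
    # Keep the shorter string as b so that each row is as small as possible.
    if len(a) < len(b):
        a, b = b, a
    # previous[j] holds the distance between the empty prefix of a and b[:j].
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]  # distance between a[:i] and the empty string
        for j, char_b in enumerate(b, start=1):
            substitution_cost = 0 if char_a == char_b else 1
            current.append(min(
                previous[j] + 1,                      # deletion
                current[j - 1] + 1,                   # insertion
                previous[j - 1] + substitution_cost,  # substitution or match
            ))
        previous = current
    return previous[-1]


distance = levenshtein("kitten", "sitting")
```

For the "kitten" and "sitting" example above, the function returns 3.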

F-Measure / F1 Score

F-measure, also known as the F1 score, is a measure of a model’s accuracy that takes into account both the precision and the recall of the model. It is commonly used in the field of natural language processing and information retrieval to evaluate the performance of machine learning models.

Precision refers to the proportion of the model’s positive predictions that are correct, while recall refers to the proportion of all actual positive cases that the model correctly identifies. The F1 score is the harmonic mean of precision and recall, and is calculated as follows:

F1 = 2 * (precision * recall) / (precision + recall)

The F1 score is a useful measure of a model’s accuracy because it considers both the precision and the recall of the model. A model with high precision but low recall will have a low F1 score, while a model with high recall but low precision will also have a low F1 score. On the other hand, a model with both high precision and high recall will have a high F1 score.
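A short worked example may help. The sketch below computes precision, recall and F1 from counts of true positives, false positives and false negatives; the function name and the sample counts are invented for illustration.

```python
def precision_recall_f1(true_pos, false_pos, false_neg):
    """Compute precision, recall and F1 from prediction counts."""
    predicted = true_pos + false_pos   # everything the model flagged
    actual = true_pos + false_neg      # everything it should have flagged
    precision = true_pos / predicted if predicted else 0.0
    recall = true_pos / actual if actual else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * (precision * recall) / (precision + recall)
    return precision, recall, f1


# A model that flags 10 items, 8 of them correctly, but misses 8 others.
precision, recall, f1 = precision_recall_f1(true_pos=8, false_pos=2, false_neg=8)
```

With a precision of 0.8 and a recall of 0.5, the F1 score is about 0.62, which is closer to the lower of the two values than a simple average would be.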

The F1 score can be used to compare the performance of different models on the same dataset, or to compare the performance of a single model on different datasets. It is a widely used metric in the field of natural language processing and information retrieval, and is often used to evaluate the performance of machine learning models on tasks such as text classification and information retrieval.

FIGS, EFIGS, FIGSDRP, CJK, LATAM

These combinations of letters are abbreviations for sets of languages, usually the languages a game is localized into or published in. Specifically they are as follows:

- FIGS: French, Italian, German, Spanish

- EFIGS: English, French, Italian, German, Spanish

- FIGSDRP: French, Italian, German, Spanish, Dutch, Russian, Portuguese

- CJK: Chinese, Japanese, Korean

- LATAM: Short for Latin America; refers to Latin American Spanish and Brazilian Portuguese

Fuzzy Match

Fuzzy matching is a technique used by translation memory tools to find previously translated segments that are similar, but not identical, to the text being translated. Matches are usually ranked by how closely they resemble the new text, so the translator can reuse and adapt the closest one. This saves time when translating content that repeats with small variations.
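A fuzzy lookup can be sketched with Python’s standard `difflib` module. The similarity ratio used here is only a stand-in for the proprietary scoring that translation memory tools apply, and the 0.75 threshold and sample data are assumptions.

```python
from difflib import SequenceMatcher


def best_fuzzy_match(segment, tm, threshold=0.75):
    """Return (score, source, target) for the closest TM entry, or None."""
    best = None
    for source, target in tm:
        score = SequenceMatcher(None, segment.lower(), source.lower()).ratio()
        if score >= threshold and (best is None or score > best[0]):
            best = (score, source, target)
    return best


# Hypothetical English-to-French translation memory.
fuzzy_tm = [
    ("Click the Save button.", "Cliquez sur le bouton Enregistrer."),
    ("Close the window.", "Fermez la fenêtre."),
]

match = best_fuzzy_match("Click the Save button now.", fuzzy_tm)
no_match = best_fuzzy_match("Completely unrelated text", fuzzy_tm)
```

The first lookup returns the stored "Click the Save button." segment as a close match that the translator can adapt, while the second returns `None` because nothing in the memory is similar enough.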

G11N

See Globalization

GDPR

The General Data Protection Regulation (GDPR) is a data protection and privacy law that was adopted by the European Union (EU) in 2016. It replaces the 1995 EU Data Protection Directive and applies to all organizations that process the personal data of individuals in the EU, regardless of the organization’s location.

The GDPR sets out a number of principles and requirements for the collection, use, and protection of personal data. It requires organizations to be transparent about how they collect, use, and share personal data, and to only process personal data for specified and explicit purposes. It also gives individuals certain rights with regard to their personal data, such as the right to access, rectify, erase, or restrict the processing of their data.

The GDPR applies to a wide range of personal data, including names, addresses, email addresses, IP addresses, and other identifying information. It applies to both electronic and paper records, and to data processed both manually and automatically.

Organizations that fail to comply with the GDPR may be subject to fines of up to 4% of their annual global turnover or €20 million (whichever is greater). As such, the GDPR is an important consideration for organizations that process the personal data of individuals in the EU.
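The "whichever is greater" rule is simple arithmetic, sketched below. The function and constant names are our own, and the calculation covers only the maximum described in this entry.

```python
GDPR_FIXED_CAP_EUR = 20_000_000
GDPR_TURNOVER_PERCENT = 4


def max_gdpr_fine(annual_global_turnover_eur):
    """Return the higher of 4% of turnover and the fixed EUR 20 million cap."""
    turnover_based_cap = annual_global_turnover_eur * GDPR_TURNOVER_PERCENT / 100
    return max(turnover_based_cap, GDPR_FIXED_CAP_EUR)


smaller_company = max_gdpr_fine(100_000_000)    # 4% would be EUR 4 million
larger_company = max_gdpr_fine(1_000_000_000)   # 4% is EUR 40 million
```

For a company with a turnover of €100 million the fixed €20 million figure applies, while for a company with a turnover of €1 billion the 4% figure of €40 million is the greater amount.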

Generic Machine Translation

When a machine translation system has not been customized and is not specialized in a specific domain, this is commonly referred to as “Generic” machine translation. Google Translate, Microsoft Translator and other free public translation systems fall into this category. Generic machine translation can often be useful for getting a general meaning of a piece of text, but is prone to grammar, syntax and other errors.

Google Translate and Microsoft Translator are designed to translate anything, anytime, for any purpose, so as to provide an understanding. This has many very valuable uses. However, there are many cases where the quality is insufficient. Additionally, there are many significant data privacy, security, and compliance issues.

See the Omniscien page on Data Privacy, Security and Compliance.

See the Omniscien FAQ – What is generic Machine Translation?

GILT

GILT is an acronym that stands for Globalization, Internationalization, Localization, and Translation.

Globalization

The term Globalization refers to the development of a global market through the integration of financial markets and economic development. A systematic approach to international business operations, global marketing, and management can result in an increase in corporate profits or foster new markets for innovation, research, development and growth.

Glossary

A glossary is a list of terms and definitions used for a specific localization project. This glossary defines terms that are specific to the website’s target audience, such as vocabulary related to the product or industry. It explains what the terms mean, how to translate them, and whether to translate them at all (as some brand and feature names may be DNT).

Graphical User Interface

A Graphical User Interface (GUI), also sometimes referred to as the “user interface” or simply the “interface,” is a form of interaction through which users interface with a computer or device. A GUI uses icons, menus and other features to display information and user controls. It allows computer users to interact with electronic devices through visual indicators or graphics rather than traditional command line tools.

Graphics Processing Unit

A graphics processing unit is a specialized electronic circuit designed to manipulate and alter memory to accelerate the creation of images in a frame buffer intended for output to a display device. GPUs are used in embedded systems, mobile phones, personal computers, workstations, and game consoles. In recent years, GPUs have been heavily used for Machine Learning tasks. One such task is the training of Neural Machine Translation engines.

GPU

See Graphics Processing Unit

GUI

See Graphical User Interface

High Quality Translation

A high-quality translation output should be comprehensible and targeted to a specific audience. Translations that follow all grammatical conventions and adhere to rules for line breaks, punctuation, alignment and capitalization are easier to read, resulting in improved understanding of the original message. These are the fundamentals of high-quality translation. However, every use case has different requirements and there are tradeoffs between translation quality, speed of translation, time and effort to translate, amount of human review and post-editing, and the cost or budget for the tasks.

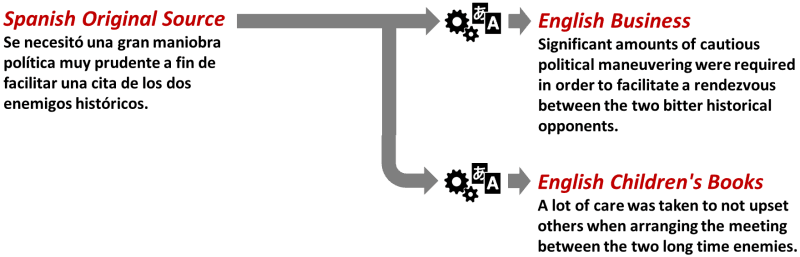

In many cases, a high-quality translation should also be specifically adapted for a target audience, writing style, industry domain and purpose. A body of text may be translated perfectly yet be totally unsuitable for the target audience. The accompanying graphic shows an example of Business style (e.g., Forbes, Economist), and a second example of Children’s Books (e.g., Harry Potter). The Business style would be totally unsuitable for children’s books.

HITL

See Human-In-The-Loop

Human-In-The-Loop

Human-in-the-loop (HITL) is a branch of artificial intelligence that leverages both human and machine intelligence to create machine learning models. In a traditional human-in-the-loop approach, people are involved in training, tuning, and testing a particular algorithm.

Human-in-the-loop approaches address several important problems in machine learning: they allow humans to incorporate their subject matter expertise while using AI, and they recognize that AI is an integral component of human decision making, not something separate.

I18n

Short for Internationalization. “I” is followed by 18 letters ending with “N”.
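The abbreviation follows a simple counting rule, sketched below. The function name is hypothetical; the same rule produces L10n from Localization.

```python
def numeronym(word: str) -> str:
    """Abbreviate a word as first letter + count of inner letters + last letter."""
    if len(word) < 3:
        # Nothing to abbreviate between the first and last letter.
        return word
    return f"{word[0]}{len(word) - 2}{word[-1]}"


i18n = numeronym("internationalization")  # 20 letters: "i" + 18 + "n"
l10n = numeronym("localization")          # 12 letters: "l" + 10 + "n"
```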

In-Context Translation

In-context translation enables translators to better understand the meanings of words, phrases and sentences in their context by showing the source text alongside context elements such as the background image or product screenshots.

Industry Domain

Machine translation quality is improved by better understanding of context. Industry Domains help with setting the appropriate context and bias within a translation. Unlike humans, machines cannot understand context (yet). The simple phrase “I caught a virus” could refer to your personal health or to your computer. Context can change the meaning to something totally different. Industry domains provide the context needed for higher quality translations.

There is no standardized term for Industry Domains. Different machine translation vendors and tools refer to them using a very wide variety of synonyms. The most common are Stock Engines, Stock Models, and Industry Domains. You may also hear Industry Domains referred to by the following synonyms:

- Industry Domains, Industry Domain MT Engines, Industry Domain Machine Translation Engines, Industry MT Domains, Industry Machine Translation Domains, Industry Engines, Industry MT Engines, Industry Machine Translation Engines

- Stock Engines, Stock MT Engines, Stock Machine Translation Engines, Stock Models, Stock MT Models, Stock Machine Translation Models

- Stock Corpora, Stock Corpus, Stock Training Corpora, Stock Training Corpus, Stock Data, Stock Training Data, Industry Corpora, Industry Domain Corpora, Industry Domain Training Data, Industry Domain Data, Industry Corpus, Industry Domain Corpus

- Industry Stock Engines, Industry Stock MT Engines, Industry Stock Machine Translation Engines

- Baseline Engines, Baseline MT Engines, Baseline Machine Translation Engines, Baseline Models, Baseline MT Models, Baseline Machine Translation Models

Internationalization (I18n)

Internationalization is the practice of designing products, services and internal operations to facilitate expansion into international markets. It is the process of designing a product or content so that it can be localized for various regions and countries with minimal effort.

Interpreting

Interpreting is the live human translation of spoken material, which is done at events or in a conference setting. Interpreters convert spoken words into another language while they are being said in real-time.

Interpreters handle speeches and conversations in conferences, business meetings, negotiations or any official event, as well as private conversations between individuals who speak different languages. If an interpreter’s skills aren’t up to par, they fail their audience. It is important for anyone who requires an interpreter to ensure that they receive someone with the proper qualifications.

L10n

Short for Localization. “L” followed by 10 letters ending with “N”.

Language Code

Language codes are short alphabetic codes assigned to languages. The most widely used are defined in the ISO 639 family of standards: ISO 639-1 defines two-letter codes (for example, “en” for English), and ISO 639-2 defines three-letter codes (for example, “eng” for English).

Language Identification / Language ID

Language identification or language guessing is the problem of determining which natural language given content is in. Natural language processing systems often use it to detect the predominant language in their input, or to guess what language a user’s input text is, for example for multilingual content retrieval or machine translation systems.
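As a rough illustration of the idea, the sketch below guesses a language by counting how many common function words from each language appear in the text. The word lists and function name are invented for this example, and production systems typically rely on statistical or neural models trained on much more data.

```python
# Tiny, hand-picked stopword lists (an assumption made for this sketch).
STOPWORDS = {
    "en": {"the", "and", "is", "of", "to", "in"},
    "es": {"el", "la", "y", "es", "de", "los"},
    "fr": {"le", "et", "est", "les", "un", "une"},
}


def guess_language(text: str):
    """Return the language code whose stopwords occur most often, or None."""
    tokens = text.lower().split()
    scores = {
        lang: sum(token in words for token in tokens)
        for lang, words in STOPWORDS.items()
    }
    best = max(scores, key=scores.get)
    # No stopword from any list was seen: refuse to guess.
    return best if scores[best] > 0 else None


english = guess_language("the cat is in the house")
spanish = guess_language("el gato y el perro")
unknown = guess_language("12345")
```

Running the same function on each sentence of a document, instead of on the whole text, gives a crude form of sentence-by-sentence identification.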

Language Studio includes advanced automated Language ID processing tools, including dominant language in a document and sentence-by-sentence language identification.

Language Pair / Language Combination

A language pair refers to the two languages between which a translator or interpreter can provide translation services, for example English/Spanish or English/Japanese. A translator or interpreter is not always restricted to a single pair; they may be able to work between three different languages such as English/Spanish/French.

Language Service Provider / Localization Service Provider (LSP)

Language service providers (LSPs) are local, freelance or global companies that provide translation, localization and interpretation. Services can be provided for various purposes, such as technology, marketing, sports and translation of legal documents. Language services can include anything from legal translations to website content to help new medical systems integrate into a hospital.

Levenshtein Distance

See Edit Distance

Linguistic Quality Assessment (LQA)

Linguistic Quality Assessment (LQA) is a process of evaluating translation quality. This is done by identifying areas for improvement and then implementing the necessary changes. During this process, the reviewer checks the translated documents for errors and records each one in an LQA form under the appropriate error category and severity.

Literal Translation

A literal translation is a word-for-word rendering of the source language text in the target language. Although this type of translation gives a good idea of what the source text means and may be understood, it has severe problems with inflection, context and quality.

Load-On-Demand MT Engine / Load-On-Demand Machine Translation Engine

A Load-On-Demand MT Engine is loaded only when needed. When processing is complete, it is unloaded again to release resources for other processes. With Language Studio V6, an MT engine will typically load in 2-5 seconds. This is the opposite of a Dedicated MT Engine, which stays loaded and consumes compute resources even when it is not in use.

Loanword

Loanwords are words taken from one language and used in another without translation. Some loanwords keep the spelling, hyphenation or accents of the language of origin, but this is not always the case. Examples of loanwords used in English include bizarre and hors d’oeuvre (from French), nacho (from Spanish), and tsunami (from Japanese).

Locale

Locale is a term used to describe the combination of a language and a specific geographic region (usually a country). The linguistic and cultural adaptation of apps and services for users in specific regions is called localization.
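Locales are commonly written as a language code joined to a region code, such as "en-US" or "pt_BR". The sketch below splits such an identifier into its two parts. It is deliberately simplified: the names are hypothetical, and it ignores script subtags (as in "zh-Hans-CN") and other extensions that real locale identifiers can carry.

```python
from typing import NamedTuple, Optional


class Locale(NamedTuple):
    language: str           # e.g. "en"
    region: Optional[str]   # e.g. "US", or None when no region is given


def parse_locale(identifier: str) -> Locale:
    """Split a simple 'language-REGION' or 'language_REGION' identifier."""
    parts = identifier.replace("_", "-").split("-")
    language = parts[0].lower()
    region = parts[1].upper() if len(parts) > 1 else None
    return Locale(language, region)


us_english = parse_locale("en-US")
brazilian_portuguese = parse_locale("pt_br")
french_only = parse_locale("fr")
```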

Localization (L10n)

Localization is the adaptation of a particular product or service to one or more international markets (See Internationalization). A localized version may use different language text and cultural references; different currencies, regulations, or conventions; and may include specific segments or audiences within the market.

Localization Software

Localization software (also called translation management software) aims to minimize manual and repetitive localization work for software development teams. By putting a single source of translated content into the hands of multiple translators, localization tools empower developers by simplifying the translation process, reducing translation time and costs, improving translation quality, and increasing developer efficiency.

Localization Testing

Localization testing is the process of verifying and validating the localization done for a software or mobile application product. It is also known as internationalization testing as it helps to ensure that the software/application can be used successfully in various markets.

Localizability

Localizability is the degree to which a program can be translated. For example, if all the strings in a program are hard-coded and cannot be changed without modifying the code, the program has a localizability problem.

LocKit / Lockit

A localization kit, or “Lockit”, is a bundle of files that are provided to the translator so they can proceed with localization. This can include any number of things, the most important being the source file or files that need translating or tweaking. Ideally, the lockit will also include various reference files such as a glossary or termbase, a translation memory, a style guide and character sheet (with info on the background or personalities of the characters in a game).

LSP

See Language Service Provider

Machine Learning

Machine learning is a method of teaching computers to learn and make decisions on their own, without being explicitly programmed. It involves training a computer system using a large dataset, allowing the system to learn from the data and make intelligent decisions or predictions based on that learning. Machine learning, like the human brain, can learn to adapt flexibly and efficiently to unfamiliar tasks. The term covers many areas of computer science, such as classification (which assigns an input vector to one of a set of categories), regression (which predicts a continuous outcome such as age or weight based on an input vector) and clustering (grouping similar examples together).

In the context of Localization, Machine Learning is often used to train custom machine translation or voice recognition models. Some of the most significant advances in AI over the past decade have come through pushing the limits of machine learning. Training a machine to learn from large amounts of data can lead to models that outperform humans at tasks like object detection and text translation.

There are several different types of machine learning, including supervised learning, unsupervised learning, self-supervised learning, semi-supervised learning, and reinforcement learning.

In supervised learning, the algorithm is trained on a labeled dataset, where the correct output is provided for each example in the training set. The goal is for the algorithm to make predictions on new, unseen examples that are drawn from the same distribution as the training set.
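As a minimal sketch of supervised learning, the example below uses a nearest-neighbour classifier: it labels a new example with the label of the most similar training example. The dataset, feature choice and labels are all invented for illustration.

```python
# Hypothetical labeled training set: (features, label).
# Features are (word count, average word length); the labels are made up.
TRAINING_SET = [
    ((5.0, 4.0), "chat message"),
    ((6.0, 3.5), "chat message"),
    ((40.0, 6.0), "legal clause"),
    ((35.0, 6.5), "legal clause"),
]


def squared_distance(a, b):
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def predict(features, training_set=TRAINING_SET):
    """Return the label of the closest labeled training example."""
    _, label = min(
        training_set,
        key=lambda example: squared_distance(example[0], features),
    )
    return label


short_text = predict((7.0, 4.2))
long_text = predict((38.0, 6.1))
```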

In unsupervised learning, the algorithm is not given any labeled examples. Instead, it must find patterns and relationships in the data on its own. One common application of unsupervised learning is clustering, where the algorithm groups similar examples together.

Self-supervised learning is a type of machine learning where the training data is automatically labeled by the learning algorithm itself, rather than being labeled by a human. In other words, the algorithm generates its own supervision signal from the input data, allowing it to learn without the need for human-provided labels.

Semi-supervised learning is a combination of supervised and unsupervised learning, where the algorithm is given a small amount of labeled data and a large amount of unlabeled data.

Reinforcement learning is a type of machine learning where an agent learns by interacting with its environment and receiving rewards or punishments for its actions.

Machine learning has been applied to a wide range of problems, including image and speech recognition, natural language processing, and even playing games like chess and Go. It has also been used in industries such as healthcare, finance, and marketing to analyze large datasets and make predictions or decisions based on that data.

Machine Translation (MT)

Machine translation (MT) is a software-based process that translates content from one language to another without human intervention. The technology behind MT is constantly evolving and will allow users to interact with their target audience in their native language.

MT enables greater accessibility to content across languages and cultures by increasing the reach of websites, mobile apps, and other software tools, or making it possible for customers and readers to communicate with each other in their own languages without the need of human translators.

Machine translation is also used heavily by professional translators and Language Service Providers as a way to create a first draft that is then usually Post-Edited by a human to perfection. As MT has matured and evolved, modern Neural Machine Translation requires even less editing and in many cases can be perfect. However, for publication purposes, it is recommended to have a human in the process to ensure translation quality is at the appropriate level.

Machine Translation Software

Machine Translation Software is a specialized set of algorithms that automatically translate source language content into a target language.

Language Studio is one of the most powerful, most accurate, and fastest Machine Translation Software products on the market. It includes a set of Powerful Tools for Data Creation, Preparation, and Analysis that greatly expand the Machine Translation Customization process and its capabilities.

Machine Translation Customization / MT Customization

Machine Translation Customization is the adaptation of a machine translation system to be specialized towards a specific domain or topic. Customized Machine Translation will generate translations that are more precise and accurate to your needs. More accurate translations are created by adding your own Translation Memories or other relevant data.

Modern Neural Machine Translation Systems can incorporate multiple source languages and multiple target languages. This can enhance a specific language pair or be used as a multi-language machine translation engine to be used for many languages. In some cases, engines have been trained with more than 100 languages embedded within the engine.

Language Studio Custom Machine Translation Engines provide highly accurate translations that are domain and context specific.

Marketing Translation

Marketing translation is the process of adapting marketing materials into local languages and cultures for global use. By adapting your company’s positioning, voice and tone to resonate with audiences across cultural boundaries, you can more effectively communicate the benefits of your products and services to potential consumers. Marketing translation ensures that the company’s brand message is consistent across all marketing materials including websites, collateral, product specifications, brochures and packaging.

Markup Language

A markup language is a set of codes or symbols that are used to add formatting and structure to text documents. Markup languages are used to create and describe the structure of text documents, such as web pages, and to specify how they should be displayed or formatted.

Markup language refers to the different web languages and tags that specify the formatting, layout, and style of website content. When translating markup language, the content inside the tags is translated while the tags themselves are left unchanged. The tag <b> marks bold text in this example: <b>this phrase is bold</b>. For machine translation, the markup is usually removed, the text is translated, and then the markup is reinserted at the new location based on word order movements.
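The sketch below shows one simple way to translate only the text between tags while leaving the tags untouched. It is a simplification of the process described above: each text fragment is translated on its own, so it does not handle word-order changes across tags. The function names are hypothetical, and `str.upper` stands in for a real translation call.

```python
import re

# Matches a single tag such as <b>, </b> or <a href="...">.
TAG_PATTERN = re.compile(r"(<[^>]+>)")


def translate_markup(markup: str, translate) -> str:
    """Apply `translate` to text fragments and copy tags through unchanged."""
    pieces = TAG_PATTERN.split(markup)  # the capture group keeps the tags
    result = []
    for piece in pieces:
        if TAG_PATTERN.fullmatch(piece) or not piece.strip():
            result.append(piece)             # a tag or whitespace: keep as-is
        else:
            result.append(translate(piece))  # translatable text
    return "".join(result)


# str.upper is only a placeholder for a real translation function.
translated = translate_markup("<p>Hello <b>world</b></p>", str.upper)
```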

The most common markup language is HTML (HyperText Markup Language), which is used to create the structure and content of web pages. HTML uses a set of tags to define the structure of a web page, including headings, paragraphs, lists, and links.

Other markup languages include XML (Extensible Markup Language), which is used to describe and structure data, and LaTeX, which is used for formatting and typesetting documents in the fields of science and technology.

Markup languages are different from programming languages, which are used to write code that can be executed by a computer to perform a specific task. Markup languages are used to describe and structure the content of a document, rather than to specify a sequence of actions for the computer to perform.

Matches and Repetitions

Each time a new document is submitted for translation, each line of text is analyzed against the on-file translation memories. The graphical user interface of the Translation Management System will highlight matches and repetitions in the document so you can easily identify them for further action. From an efficiency standpoint, exact matches provide the highest amount of cost savings because the text already has an approved translation. However, if these matches are not exact they may need to be modified by a human translator before being added to the batch. Repetitions are phrases or sentences that are repeated throughout a document and are usually discounted as 100% matches.
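The analysis described above can be sketched as follows. This is an illustration only: the translation memory contents and function names are invented, and the similarity score from Python's `difflib.SequenceMatcher` is a stand-in for whatever match-scoring method a given translation tool actually uses.

```python
from collections import Counter
from difflib import SequenceMatcher

# Hypothetical translation memory: source segment -> approved translation.
TRANSLATION_MEMORY = {
    "Save file": "Guardar archivo",
    "Open file": "Abrir archivo",
}


def best_match(segment: str, memory=TRANSLATION_MEMORY):
    """Return (closest stored source segment, match percentage)."""
    best_source, best_ratio = None, 0.0
    for source in memory:
        ratio = SequenceMatcher(None, segment, source).ratio()
        if ratio > best_ratio:
            best_source, best_ratio = source, ratio
    return best_source, round(best_ratio * 100)


def repetitions(segments):
    """Return the segments that occur more than once, with their counts."""
    counts = Counter(segments)
    return {segment: n for segment, n in counts.items() if n > 1}


exact_source, exact_score = best_match("Save file")      # exact match
fuzzy_source, fuzzy_score = best_match("Save the file")  # fuzzy match
repeated = repetitions(["Save file", "Close", "Save file"])
```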

Metadata

Metadata is “data that provides information about other data”, but not the content of the data, such as the text of a message or the image itself. Metadata provides additional context about items and objects. It’s used to structure, semantically define, and target content. Metadata makes it easier for machines to understand and manipulate data by enabling programs to find information by subject matter or nature instead of just using keywords. Metadata extends the capabilities of content, making it more powerful and enabling efficient operation in a data-driven world. Metadata is essential for effective management and operation of digital assets throughout their life cycle.

ML

See Machine Learning

MLV

See Multi-Language Vendor

Mother Tongue

Mother tongue refers to any language that is the first language a person learns from his/her parents in childhood. Whether an individual speaks one, few or several languages, each language can be classified as their mother tongue, depending on where they learned it (home vs school). It is possible for one to have multiple mother tongues if they were raised in a bi-lingual household or country or had extensive lifetime education in a second language.

MT

See Machine Translation

MT Quality Estimation / Machine Translation Quality Estimation (MTQE)

MTQE is an AI-based feature that provides segment-level quality estimations for machine translation (MT) suggestions. Similar to the quality estimations for translation memory (TM) matches and non-translatables (NT), MTQE helps you determine if alternative suggestions are high-quality translations or are required to be edited by a human translator due to low quality.

Multilingual Workflow

A multilingual workflow is the automation of business processes by managing multilingual content. The systematic use of translation management systems, automated translation and human post-editing provides companies with an opportunity to realize large cost savings, fewer errors in documents and improved customer satisfaction.

Multi-Language Vendor

A multi-language vendor is a localization service provider that offers a wide range of languages.

Named Entity Recognition (NER)

Named entity recognition (NER), sometimes referred to as entity chunking, extraction, or identification, is the task of identifying and categorizing key information (entities) in text. An entity can be any word or series of words that consistently refers to the same thing. Every detected entity is classified into a predetermined category. For example, an NER machine learning (ML) model might detect the phrase “Omniscien Technologies” in a text and classify it as a “Company”.
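To make the input and output of the task concrete, the sketch below uses a plain dictionary lookup (a gazetteer) instead of a machine learning model. The entity list and function name are invented for this example; a trained NER model would also recognize entities it has never seen listed.

```python
# Hypothetical gazetteer: known entity text -> category.
GAZETTEER = {
    "Omniscien Technologies": "Company",
    "Tokyo": "Location",
}


def find_entities(text: str, gazetteer=GAZETTEER):
    """Return (entity, category, start offset) for each known entity found."""
    found = []
    for name, category in gazetteer.items():
        start = text.find(name)
        if start != -1:
            found.append((name, category, start))
    # Report entities in the order they appear in the text.
    return sorted(found, key=lambda entity: entity[2])


entities = find_entities("Omniscien Technologies opened an office in Tokyo.")
```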

Language Studio provides a range of tools and algorithms for Named Entity Recognition.

NER

See Named Entity Recognition

Neural Machine Translation (NMT)

Neural Machine Translation (also known as Neural MT, NMT, Deep Neural Machine Translation, Deep NMT, or DNMT) is a state-of-the-art machine translation approach that utilizes neural network techniques to predict the likelihood of a set of words in sequence. This can be a text fragment, a complete sentence, or, with the latest advances, an entire document.

NMT is a radically different approach to solving the problem of language translation and localization that uses deep neural networks and artificial intelligence to train neural models. NMT has quickly become the dominant approach to machine translation with a major transition from SMT to NMT in just 3 years. Neural Machine Translation typically produces much higher quality translations than Statistical Machine Translation approaches, with better fluency and adequacy.

See the Omniscien FAQ – What is Neural Machine Translation (NMT)?

Neural Network

Neural networks are a subset of machine learning that mimic the way that biological neurons signal to one another. They are comprised of node layers, containing an input layer, one or more hidden layers, and an output layer. Each node has an associated weight and threshold. If the output of any individual node is above the specified threshold value, that node is activated, sending data to the next layer of the network.
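The layer-by-layer behaviour described above can be sketched in a few lines. The weights and thresholds below are arbitrary values chosen for illustration; in practice they are learned during training, and most modern networks use smooth activation functions in place of a hard threshold.

```python
def layer_forward(inputs, weights, thresholds):
    """Compute one layer: each node sums its weighted inputs and passes
    the result on only if it exceeds that node's threshold."""
    outputs = []
    for node_weights, threshold in zip(weights, thresholds):
        total = sum(w * x for w, x in zip(node_weights, inputs))
        outputs.append(total if total > threshold else 0.0)
    return outputs


# Input layer values (arbitrary).
inputs = [1.0, 0.5]

# Hidden layer with two nodes, then an output layer with one node.
hidden = layer_forward(inputs, weights=[[0.5, 0.5], [-1.0, 1.0]],
                       thresholds=[0.5, 0.0])
output = layer_forward(hidden, weights=[[2.0, 1.0]], thresholds=[1.0])
```

In this example the second hidden node receives a weighted sum of -0.5, which is below its threshold, so it stays inactive and contributes nothing to the output layer.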

NMT

See Neural Machine Translation

PEMT

See Post-Edited MT

Pivot Translation / Pivot Language Pairs

Language pair pivoting uses a middle language (e.g. German – English … English – French) to bridge the gap between language pairs. This technique is used by many translation tools such as Google Translate, Microsoft Translator and DeepL. However, any time there is a pivot language in the middle, errors in translation are amplified. Omniscien tools translate directly between 600+ language pair combinations, in addition to pivoting for lower resource language pairs.
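Pivoting is the composition of two translation steps. The toy sketch below uses word-level dictionaries, invented for this example, to translate German to French through English. It also shows why errors compound: anything that goes wrong in the first step is passed straight into the second.

```python
# Toy word-level dictionaries (assumptions made for this sketch).
GERMAN_TO_ENGLISH = {"hund": "dog", "katze": "cat"}
ENGLISH_TO_FRENCH = {"dog": "chien", "cat": "chat"}


def translate_word(word: str, dictionary: dict):
    """Look up a single word; return None when it is unknown."""
    return dictionary.get(word.lower())


def pivot_translate(word: str, source_to_pivot: dict, pivot_to_target: dict):
    """Translate source -> pivot -> target, failing if either step fails."""
    pivot_word = translate_word(word, source_to_pivot)
    if pivot_word is None:
        return None  # a failure in step one cannot be repaired in step two
    return translate_word(pivot_word, pivot_to_target)


french = pivot_translate("Hund", GERMAN_TO_ENGLISH, ENGLISH_TO_FRENCH)
missing = pivot_translate("Vogel", GERMAN_TO_ENGLISH, ENGLISH_TO_FRENCH)
```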

Post-Edited MT / Post-Edited Machine Translation

In Post-Edited Machine Translation, humans review the work of machines. Machine Translation Software takes care of the initial translation, and human translators then review and edit each sentence before it’s published. This process captures the efficiency of MT while ensuring the accuracy of manual translation. Post-edited machine translation content is very good for improving an existing Custom MT Engine as it directly addresses errors that the engine makes.

Pseudo-Localization

Pseudo-localization is a testing technique used to some extent in many software development projects, before real translation begins. It’s used to verify that the user interface is capable of containing the translated strings (which are often longer than the source) and to discover possible internationalization issues. Source strings are replaced with automatically altered versions, typically with accented characters and added padding, so that the text stays readable to developers while showing what the content will look like if translated into another language. This also helps you understand what string length restrictions there are and which elements, such as hard-coded strings, have not been prepared for translation.
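A common way to pseudo-localize a string is to swap letters for accented look-alikes, pad the string to simulate longer translations, and wrap it in brackets so truncation is easy to spot. The sketch below is one such scheme; the function name, the 30% expansion figure and the bracket convention are assumptions made for illustration, and it does not protect placeholders such as `{name}`.

```python
# Map plain vowels to accented look-alikes so the text stays readable.
ACCENTED = str.maketrans("aeiouAEIOU", "áéíóúÁÉÍÓÚ")


def pseudo_localize(text: str, expansion: float = 0.3) -> str:
    """Return an accented, padded and bracketed version of `text`."""
    # Pad by roughly `expansion` of the original length (at least 1 char)
    # to imitate translations that are longer than the source.
    padding = "~" * max(1, round(len(text) * expansion))
    return f"[{text.translate(ACCENTED)}{padding}]"


label = pseudo_localize("Save file")
```

If the closing bracket is missing when the string is displayed, the interface is truncating text; if a string appears without accents, it is probably hard-coded and bypassing the localization system.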

Proofreading

Proofreading is checking a translated text to identify and correct spelling, grammar, syntax, coherency, and other errors before it is printed or published. The proofreader reviews the document for all potential errors and ensures that the final version does not contain careless mistakes.

QA

See Quality Assurance

Quality Assurance

Quality assurance (QA) is the process of determining whether a product or service meets specified localization and internationalization requirements. Quality assurance includes testing, auditing and inspecting translation projects to ensure that they were done correctly, but it also refers to reviews by the project manager and customer representative to ensure that the translation will do its job.

Quality Estimation

See MT Quality Estimation

RBMT

See Rule-Based Machine Translation

Reference Material

Reference material is used to help translators adhere to preferred writing styles and terminology. Reference material may include company glossaries, dictionaries, style guides, prior translations, web sites, product descriptions, customer feedback, photos/videos etc.

Right-To-Left (RTL)

Right-To-Left (RTL) languages are languages that are written from right to left, such as Arabic, Farsi, Urdu and Hebrew. They use different scripts from the Latin alphabet. RTL support can be quite challenging to add to applications and very expensive to retrofit if not planned for in advance.
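One small piece of RTL support is detecting whether a string should be laid out right to left. The sketch below uses a "first strong character" heuristic based on Python's standard `unicodedata` module; the function name is hypothetical, and full layout of mixed-direction text requires the complete Unicode Bidirectional Algorithm, which this does not implement.

```python
import unicodedata


def is_rtl(text: str) -> bool:
    """Guess text direction from the first strongly directional character."""
    for character in text:
        direction = unicodedata.bidirectional(character)
        if direction in ("R", "AL"):  # right-to-left letter (e.g. Hebrew, Arabic)
            return True
        if direction == "L":          # left-to-right letter (e.g. Latin)
            return False
    # Only digits, spaces or punctuation: default to left-to-right.
    return False


hebrew = is_rtl("שלום")
arabic = is_rtl("مرحبا")
english = is_rtl("Hello")
```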

RTL

See Right-To-Left

Rule-Based Machine Translation (RBMT)

Rules-Based Machine Translation (RBMT) systems were the first commercial machine translation systems and are based on linguistic rules that allow the words to be put in different places and to have different meanings depending on the context. RBMT technology applies large collections of linguistic rules in three different phases: analysis, transfer, and generation. Rules are developed by human language experts and programmers who have deployed extensive efforts to understand and map the rules between two languages. RBMT relies on manually built translation lexicons, some of which can be edited and refined by users to improve the translation.

See Omniscien FAQ – What is Rules-Based Machine Translation (RBMT)?

Runtime Glossary

A Runtime Glossary is a set of translated terminology pairs that can be submitted to a machine translation engine at the time of translation (runtime) with the goal of enforcing a preferred glossary. Older SMT engines had very good support for Runtime Glossaries. More modern NMT will take guidance and bias towards a runtime glossary but may not always adopt it. This is an area of research that is being worked on by the Omniscien team and others in the industry and academia.

SDK

See Software Development Kit

Segment

A segment is a coherent, meaningful chunk of text that represents a cognitive unit. It can be a clause, phrase, or sentence. Typically, the segments that share similar structure and subject matter are grouped into source texts and compiled into a Translation Memory (TM). When a segment from the TM matches those from an unknown text, it is possible to translate the unknown text automatically without the need for human intervention.

Segmentation Rules eXchange

Segmentation Rules eXchange (SRX) provides a common way to describe how to segment text for translation and other language-related processes. It was created when it was realized that TMX was less useful than expected in certain instances due to differences in how tools segment text. SRX is intended to enhance the TMX standard so that translation memory (TM) data that is exchanged between applications can be used more effectively. Having the segmentation rules available that were used when a TM was created increases the usefulness of the TM data.

Segmentation

Segmentation is the process of splitting translations into smaller, relevant chunks. It makes translators more efficient by providing them with smaller pieces to work on, makes translation memory richer and improves the localization experience. One of the most common types of segmentation is Sentence Segmentation, where a body of text is segmented into units delimited by sentence boundaries.

Sentence-Level Machine Translation

Sentence-level machine translation refers to a type of machine translation that translates individual sentences or phrases in isolation, without considering the context or relationships between sentences. This type of translation is typically used for shorter texts, such as emails, chat messages, and social media posts, where the overall structure of the document is less important.

Phrase-based machine translation systems are the most common type of sentence-level machine translation systems. These systems break the source text down into individual phrases, and then translate each phrase independently using a database of pre-translated phrases. The translated phrases are then reassembled to form the final translation.

While sentence-level machine translation can be quick and convenient for short texts, it can often produce translations that are less accurate and less coherent than document-level machine translation, as the context and relationships between sentences are not taken into account. In contrast, document-level machine translation systems consider the overall context and structure of the document, which can lead to more accurate and coherent translations.

Sentence Segmentation

Sentence segmentation is the process of dividing a written text into individual sentences. This is an important step in natural language processing, as it allows a computer to understand the structure and meaning of a piece of text by analyzing the relationships between the words and phrases in each sentence.

In English, sentence segmentation is typically performed by identifying the punctuation marks that signal the end of a sentence, such as periods, exclamation points, and question marks. However, it can be more complex than this, as there are often situations where punctuation does not clearly indicate the end of a sentence, or where multiple sentences are combined into a single string of text without appropriate punctuation. In these cases, additional techniques may be used to identify the boundaries between sentences, such as analyzing the structure of the text or using machine learning algorithms to identify patterns in the way sentences are written.
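The punctuation-based approach, and one of its complications, can be sketched as follows. The abbreviation list and function name are assumptions made for this example; real segmenters use much larger rule sets or trained models.

```python
# A few abbreviations whose trailing period does not end a sentence
# (a tiny, hand-picked list for illustration only).
ABBREVIATIONS = {"dr.", "mr.", "mrs.", "e.g.", "i.e.", "etc."}


def split_sentences(text: str):
    """Split text at '.', '!' or '?' unless the token is a known abbreviation."""
    sentences, current = [], []
    for token in text.split():
        current.append(token)
        ends_sentence = token[-1] in ".!?" and token.lower() not in ABBREVIATIONS
        if ends_sentence:
            sentences.append(" ".join(current))
            current = []
    if current:
        # Trailing text without closing punctuation is kept as a sentence.
        sentences.append(" ".join(current))
    return sentences


sentences = split_sentences("Dr. Smith arrived. Was he late? No!")
```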

Sentence Alignment

Sentence alignment, also known as sentence pairing, is the task of aligning sentences in a document pair. The aligned sentence pairs express the same meaning in different languages. The most common alignment is 1-to-1 alignment. However, there is a significant presence of complex alignment relationships, including 1-to-0, 0-to-1 and many-to-many cases, such as 1-to-2, 1-to-3 and 2-to-2.

Language Studio provides advanced tools for aligning sentences and extracting clean Translation Memories: previous translations between two documents are automatically matched between source and target language. Additional data cleaning tools fine-tune the translations and provide confidence scores that identify the most accurate, highest-quality translations.

Simship / Simultaneous Shipping

Simultaneous shipping is when content or a product is released for the domestic market and the foreign market at the same time. The term has become more standard in recent years, since it is now increasingly common for major game developers to release their products in Korea and Japan, as well as North America and Europe.

SLV

See Single Language Vendor (SLV)

Single Language Vendor (SLV)

A Single Language Vendor is a localization service provider that works with only one language or a small number of languages. An SLV is useful for companies that don’t have the resources or market reach to translate a product into multiple languages.

Source File

A source file is a file that contains the original strings, written in the source language, from which translations are produced. It can be any type of text file and should not be confused with target files, which contain the translated strings for each language.

Source Language

The source language is the original language of a text that is being translated into another language.

Software Development Kit

An SDK (Software Development Kit) is a set of tools, libraries, documentation, code samples, processes and guides that allows developers to create software applications for a specific platform. The goal of an SDK is to make it easier for programmers to build on that platform without writing every component from scratch.

Statistical Machine Translation (SMT)

Statistical Machine Translation (SMT) learns how to translate by analyzing existing human translations (known as bilingual text corpora). In contrast to the Rules-Based Machine Translation (RBMT) approach, which is usually word-based, most modern SMT systems are phrase-based and assemble translations from overlapping phrases. Phrase-based translation aims to reduce the restrictions of word-based translation by translating whole sequences of words, where the source and target lengths may differ. These sequences are called phrases, but they are typically not linguistic phrases; they are sequences found in bilingual text corpora using statistical methods.
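The idea of translating whole word sequences rather than single words can be shown with a toy phrase table. This is not a statistical decoder: a real SMT system scores many competing phrase segmentations with learned probabilities and a language model, whereas this sketch simply takes the longest phrase it knows. The `PHRASE_TABLE` entries and the `translate` function are invented for illustration.

```python
# Invented English-to-French phrase table; real tables are learned from bilingual corpora.
PHRASE_TABLE = {
    ("good", "morning"): "bonjour",
    ("thank", "you"): "merci",
    ("very", "much"): "beaucoup",
    ("good",): "bon",
}

def translate(sentence, max_phrase_len=2):
    """Greedy longest-match lookup: prefer a two-word phrase over a single word."""
    words = sentence.lower().split()
    output = []
    i = 0
    while i < len(words):
        for n in range(min(max_phrase_len, len(words) - i), 0, -1):
            phrase = tuple(words[i:i + n])
            if phrase in PHRASE_TABLE:
                output.append(PHRASE_TABLE[phrase])
                i += n
                break
        else:
            output.append(words[i])  # unknown word is passed through unchanged
            i += 1
    return " ".join(output)

print(translate("Good morning"))
print(translate("Thank you very much"))
```

Note that "good morning" maps to one target word while "good" alone maps to another, which is the kind of length difference and context sensitivity that word-by-word translation cannot capture.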

Stock Engines

See Industry Domains

Style Guide

A style guide indicates the elements of tone, voice, and word choice that should be observed in each target language. This guide helps translators create content that maintains a consistent brand voice. With a style guide in hand, you can be sure that your entire website, application, or elearning suite conveys your brand’s characteristic tone, whether formal, edgy, or business casual.

T9n

See Translation

Target Language

The target language is the language into which your translation will be or has been made.

Termbase

A termbase is a database containing terminology and related information. It holds three types of data: the terms, their descriptions, and their synonyms in different languages. Most termbases are multilingual and contain terminology data in a range of different languages. A termbase can also be referred to as a Localization Glossary. However, unlike this blog post, it is usually stored in a specialized XML format such as TermBase eXchange (TBX).

Term Extraction

Term extraction analyzes a given text or corpus and identifies relevant term candidates within their context. It is the starting point of all terminology management tasks and the first step in building a successful terminology database, and it is usually followed by the elimination of inconsistencies. Term extraction improves quality, reduces costs, and shortens time to market.
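A very simple form of term extraction counts how often words and word pairs recur in a text. The sketch below assumes that frequency alone is a good enough signal, which is rarely true in practice; the stopword list, thresholds, and the `extract_term_candidates` name are all hypothetical.

```python
import re
from collections import Counter

# Small illustrative stopword list; a real one would be much longer and language-specific.
STOPWORDS = {"the", "a", "an", "of", "to", "is", "in", "and", "for", "each", "with", "on", "are"}

def extract_term_candidates(text, max_len=2, min_count=2):
    """Return word sequences (up to max_len words) that occur at least min_count times."""
    tokens = re.findall(r"[a-z]+", text.lower())
    counts = Counter()
    for n in range(1, max_len + 1):
        for i in range(len(tokens) - n + 1):
            gram = tokens[i:i + n]
            # Candidates may not start or end with a stopword.
            if gram[0] in STOPWORDS or gram[-1] in STOPWORDS:
                continue
            counts[" ".join(gram)] += 1
    return {term: count for term, count in counts.items() if count >= min_count}

sample = ("The translation memory stores segments. "
          "Each segment in the translation memory is reused.")
print(extract_term_candidates(sample))
```

The candidates still need human review, which is where the elimination of inconsistencies mentioned above comes in: this sketch, for instance, treats "segment" and "segments" as unrelated words.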

TBX

See TermBase eXchange (TBX)

TEP

See Translate, Edit, Proof (TEP)

TermBase eXchange (TBX)

TermBase eXchange (TBX) is an international standard for the representation of structured concept-oriented terminological data, co-published by ISO and the Localization Industry Standards Association (LISA). A TBX file uses an XML-based format that allows for the interchange of terminology data, including detailed lexical information.

See ISO 30042:2008 – Systems to manage terminology, knowledge and content — TermBase eXchange (TBX)
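To give a feel for the format, the sketch below reads a heavily simplified TBX-style fragment with Python's standard library. The fragment follows the concept-oriented layout of the 2008 edition (one entry per concept, one language section per language) but omits the header and most optional elements, so treat it as an approximation rather than a valid TBX file. The sample data and the `read_terms` function are invented for illustration.

```python
import xml.etree.ElementTree as ET

# ElementTree exposes the xml:lang attribute under the XML namespace URI.
XML_LANG = "{http://www.w3.org/XML/1998/namespace}lang"

# Simplified, invented fragment; a real TBX file also carries a header and more metadata.
SAMPLE_TBX = """<martif type="TBX" xml:lang="en">
  <text>
    <body>
      <termEntry id="c1">
        <langSet xml:lang="en"><tig><term>translation memory</term></tig></langSet>
        <langSet xml:lang="de"><tig><term>Übersetzungsspeicher</term></tig></langSet>
      </termEntry>
    </body>
  </text>
</martif>"""

def read_terms(tbx_text):
    """Return {entry id: {language code: [terms]}} for each concept entry."""
    root = ET.fromstring(tbx_text)
    entries = {}
    for entry in root.iter("termEntry"):
        terms = {}
        for lang_set in entry.iter("langSet"):
            terms[lang_set.get(XML_LANG)] = [t.text for t in lang_set.iter("term")]
        entries[entry.get("id")] = terms
    return entries

print(read_terms(SAMPLE_TBX))
```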

Terminology Management

Terminology Management is the practice of proactively maintaining dictionaries and glossaries to improve consistency within an organization. Terms are organized and controlled based on accepted standards, with a clear set of guidelines dictating their use in order to control vocabulary usage. Terminology management helps ensure correct and consistent use of terms throughout the writing or translation process. It can be used to manage any content where accuracy is important, including marketing materials, legal documents, software specifications and more.

TM

See Translation Memory (TM)

TMX

Translation Memory eXchange (TMX) is an XML-based translation memory format designed to enable the exchange of translation memory data between CAT tools, translation vendors, and localization platforms. Sharing the data in a common format makes it possible to synchronize content between these systems. In many workflows, TMX has largely been replaced by the XLIFF standard.

OSCAR (Open Standards for Container/Content Allowing Re-use), a special interest group of the Localization Industry Standards Association (LISA), developed and maintained TMX until 2007. The standards were later released under a Creative Commons license.
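A TMX file stores each translation unit as a set of language variants. The sketch below parses a small invented TMX 1.4-style sample with Python's standard library and pulls out source/target pairs; the sample content, the header values, and the `read_pairs` function are assumptions made for this example.

```python
import xml.etree.ElementTree as ET

# ElementTree exposes the xml:lang attribute under the XML namespace URI.
XML_LANG = "{http://www.w3.org/XML/1998/namespace}lang"

# Invented sample; header attribute values are placeholders.
SAMPLE_TMX = """<tmx version="1.4">
  <header creationtool="example" creationtoolversion="1.0" segtype="sentence"
          o-tmf="example" adminlang="en" srclang="en" datatype="plaintext"/>
  <body>
    <tu>
      <tuv xml:lang="en"><seg>Save file</seg></tuv>
      <tuv xml:lang="fr"><seg>Enregistrer le fichier</seg></tuv>
    </tu>
    <tu>
      <tuv xml:lang="en"><seg>Open file</seg></tuv>
      <tuv xml:lang="fr"><seg>Ouvrir le fichier</seg></tuv>
    </tu>
  </body>
</tmx>"""

def read_pairs(tmx_text, source_lang, target_lang):
    """Return (source, target) segment pairs for units that contain both languages."""
    root = ET.fromstring(tmx_text)
    pairs = []
    for tu in root.iter("tu"):
        segments = {tuv.get(XML_LANG): tuv.findtext("seg") for tuv in tu.iter("tuv")}
        if source_lang in segments and target_lang in segments:
            pairs.append((segments[source_lang], segments[target_lang]))
    return pairs

print(read_pairs(SAMPLE_TMX, "en", "fr"))
```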

Training Data

Training data is used to teach Machine Learning algorithms how to process data. In the case of machine translation, the training data consists of millions or even billions of bilingual parallel sentences that have been human translated from one language to another. Such data is also referred to as parallel corpora.

Translatability

Translatability is the degree to which a text can be translated into another language. How much of the meaning remains valid in the target language depends on both how well each word choice works in that context and whether thoughts are expressed clearly.

Transcreation

Transcreation is the process of re-developing or adapting content from one culture to another while transferring its meaning and maintaining its intent, style, and voice. This process often requires skilled translators who are fluent in both the source and target languages, and it may need multiple rounds of proofreading. For example, when a product description written in English is translated into Spanish, the translator applies his or her knowledge of both English and Spanish to transfer the meaning as accurately as possible while maintaining the style and voice of the original.

Transcription

Audio transcription produces a written text of what is said in a recording. The recording can be anything from a simple interview to long audio files that are not easily searchable, and the resulting text can be used to build personal profiles or write reports.

Translation

Translation is the act of converting the meaning of a text from one language into another, accurately reproducing not only the meaning but also the tone. Translation is carried out by human translators, who can be bilingual or multilingual, professional or amateur. They are often employed by large translation companies or by publishing houses to translate documents from a foreign language into their native language.

In recent years, machine translation has become a standard part of the overall translation process for most translation workflows and Language Service Providers.

Translate, Edit, Proof (TEP)

TEP is an acronym for translate-edit-proof, the three most common steps of a translation project: a translator produces the translation, an editor reviews it against the source, and a proofreader checks the final text. Translation processes vary between projects, but most include these three steps in some form. For example, a translation agency may use CAT tools like Trados or memoQ to pre-translate files before they are sent to translators, and then pass the translated files to editors and proofreaders.

Translation Agency

Translation Management System (TMS)

A translation management system (TMS) is a program that helps localization teams work together and makes the translation process faster, easier, and more organized, so choosing the right TMS for your business matters. Translation Management Systems typically have a Graphical User Interface that assists professional translators with translation and machine translation post-editing tasks. Some TMS vendors have created a web-based Graphical User Interface that makes the entire localization process accessible from the web without any specialized tools installed on each person’s computer.

Examples include Across, Memsource, memoQ, XTM, and Trados Studio.

Translation Memory (TM)

A translation memory is a database of previously translated words, phrases, and sentences that saves time by suggesting translations when you need them. Combined, translation memory and machine translation are among the most valuable tools for saving time and avoiding translation mistakes. In simple terms, a Translation Memory is a collection of previous translations that were created by human translators.

The most common formats are TMX and XLIFF. These formats are used to move content between systems, users, translators, vendors, and as input when training a machine translation system.
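How a translation memory suggests a translation can be sketched as a similarity search over stored source segments. The example uses Python's standard `difflib` module as a stand-in similarity measure; CAT tools use their own matching algorithms, and the `memory` contents and `best_match` function here are invented for illustration.

```python
from difflib import SequenceMatcher

# Invented translation memory: source segment -> stored human translation.
memory = {
    "Save the file": "Enregistrer le fichier",
    "Open the file": "Ouvrir le fichier",
}

def best_match(segment, memory):
    """Return the most similar stored source segment and its similarity (0.0 to 1.0)."""
    best_source, best_score = None, 0.0
    for source in memory:
        score = SequenceMatcher(None, segment.lower(), source.lower()).ratio()
        if score > best_score:
            best_source, best_score = source, score
    return best_source, best_score

source, score = best_match("Save the files", memory)
print(source, "->", memory[source], f"(similarity {score:.0%})")
```

An identical segment scores 1.0, while a near miss such as "Save the files" scores slightly lower and would be offered to the translator as a fuzzy match to review.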

Translation Memory Leverage

Translation memory leverage is the amount of a given source text that is already covered by previous translations, created by professional translators, stored in the translation memory. It gives a better estimate of how much new translation work needs to be done. Translation memory leverage can be calculated using the simple equation TLU / (Lexical Covered Words / Translation Probability) x 100. If you are working with a Language Service Provider, make sure to discuss Translation Memory Leverage with them. Leveraging Translation Memories can streamline the entire localization process and greatly reduce time and costs.
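The sketch below does not implement the equation above, whose terms are not defined in this glossary. It assumes a simpler, commonly used view of leverage: the percentage of source words that fall in segments with an exact match in the translation memory. The function name and sample data are hypothetical.

```python
def exact_match_leverage(segments, memory_sources):
    """Percentage of words that sit in segments already present in the memory."""
    total_words = sum(len(segment.split()) for segment in segments)
    if total_words == 0:
        return 0.0
    matched_words = sum(
        len(segment.split()) for segment in segments if segment in memory_sources
    )
    return 100.0 * matched_words / total_words

# Invented project: two of the three segments were translated before.
segments = ["Save the file", "Open the file", "Delete the selected folder"]
memory_sources = {"Save the file", "Open the file"}

print(exact_match_leverage(segments, memory_sources))
```

Here 6 of the 10 source words are covered, so only the remaining segment needs new translation work. Real quotes usually also weight fuzzy matches at different rates, which this sketch ignores.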

Unicode

Unicode is a standard that can represent the characters of virtually all writing systems in one character set. It solves the problem of displaying text in any mix of languages on one page. Unicode only defines the characters and their encoding, so you still need fonts to display the text correctly.

UTF-8 / UTF-16 / UTF-32

UTF-8 is a multibyte character encoding capable of representing all the characters of the Unicode (ISO 10646) standard. It uses one to four bytes for each character: ASCII characters take a single byte, and in longer sequences each continuation byte carries 6 bits of the character’s code point. UTF-16 uses one or two 16-bit code units per character, and UTF-32 uses a fixed four bytes per character.

UTF-8 is the dominant encoding, in part because it is backward compatible with ASCII and invalid byte sequences are easy to detect while decoding. UTF-16 remains common as an internal string format on platforms such as Windows, Java, and JavaScript.
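The size differences between the three encodings are easy to check in Python. The sketch below encodes a few sample characters and reports how many bytes each encoding needs; the little-endian variants are used so that no byte order mark is added to the count, and `encoded_lengths` is a name chosen for this example.

```python
def encoded_lengths(char):
    """Number of bytes needed for one character in each encoding (no byte order mark)."""
    return {enc: len(char.encode(enc)) for enc in ("utf-8", "utf-16-le", "utf-32-le")}

samples = {
    "Latin letter A": "A",
    "Latin letter e with acute": "\u00e9",
    "CJK ideograph": "\u8a9e",
    "Emoji": "\U0001F600",
}

for name, char in samples.items():
    print(name, encoded_lengths(char))
```

Plain English text is most compact in UTF-8, while characters outside the Basic Multilingual Plane, such as emoji, take four bytes in all three encodings.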

Word Count

A word count is a calculation of the number of words in a document. It is generally used to estimate the price of a translation. Translation agencies have sophisticated tools that send data through connectors to translation memories, as well as workflow and project management programs, which they use to calculate word counts on a daily basis.
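Different tools count words slightly differently, so it helps to see what a counting rule looks like. The sketch below is one possible rule, assumed for illustration: a word is a run of letters or digits, and internal apostrophes or hyphens do not split it. It would not work for languages written without spaces, such as Chinese or Japanese, where character counts are normally used instead.

```python
import re

def count_words(text):
    """Count runs of letters/digits, keeping contractions and hyphenated words whole."""
    return len(re.findall(r"\w+(?:['’-]\w+)*", text))

print(count_words("Don't over-think it: 3 words."))
```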

XLIFF

XLIFF (XML Localization Interchange File Format) is an XML specification that allows computer-aided translation and localization tools to exchange localization data. The data is shared between CAT tools, translation vendors, and localization platforms in a common format that can be used to synchronize content between these systems. For a Language Service Provider, this is one of the most important file formats. Some vendors of CAT tools, such as memoQ and SDL (now RWS), have their own variants of XLIFF, such as MQXLIFF and SDLXLIFF.

An XLIFF file consists of one or more translation units, which are stitched together to form a single file. The text-based specification defines how localization data is stored in an XML document and how to exchange this data between systems. An XLIFF file is generated from a source document, with the source-language text broken out into segments. These segments are then translated into a target language, either directly by human translators or by machine translation.

XLIFF is an ideal transport format between multiple Computer Assisted Translation tools and translation editing software. When moving files between a localization project and a translator, the files can be easily attached and transmitted by email or other automated tools and then opened in a wide variety of translation editing platforms and tools. Once a human translator has completed their tasks, such as Machine Translation Post-Editing (MTPE), the file can be archived, delivered to a client as a Translation Memory, or uploaded for use in Machine Learning and Custom Machine Translation Engines.
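The translation units described above can be read with Python's standard library. The sketch assumes an XLIFF 1.2 file; version 2.0 and vendor variants use different element names and namespaces. The sample content and the `read_units` function are invented for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace of XLIFF 1.2 documents; "x" is just a local prefix for the queries below.
NS = {"x": "urn:oasis:names:tc:xliff:document:1.2"}

# Invented sample: the second unit has not been translated yet.
SAMPLE_XLIFF = """<xliff version="1.2" xmlns="urn:oasis:names:tc:xliff:document:1.2">
  <file original="ui.txt" source-language="en" target-language="fr" datatype="plaintext">
    <body>
      <trans-unit id="1">
        <source>Save</source>
        <target>Enregistrer</target>
      </trans-unit>
      <trans-unit id="2">
        <source>Cancel</source>
      </trans-unit>
    </body>
  </file>
</xliff>"""

def read_units(xliff_text):
    """Return (id, source, target) per translation unit; target is None if missing."""
    root = ET.fromstring(xliff_text)
    units = []
    for unit in root.iterfind(".//x:trans-unit", NS):
        source = unit.findtext("x:source", namespaces=NS)
        target = unit.findtext("x:target", namespaces=NS)
        units.append((unit.get("id"), source, target))
    return units

units = read_units(SAMPLE_XLIFF)
untranslated = [unit_id for unit_id, _, target in units if target is None]
print(units)
print(untranslated)
```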

XML

XML stands for eXtensible Markup Language, a metalanguage for defining the structure of other markup languages and data formats. XML is useful because it allows you to exchange information between programs and systems that have different formats, which is important when data needs to be shared between different applications or systems.

XML is the underlying data format used by many applications to store and exchange data. XML supports the definition of new tags, so it can be customized to the needs of various hardware and software platforms.
